Insights & Perspectives

Exploring the intersection of digital health, AI, and clinical innovation. Here are my latest thoughts and findings from the field.

May 1 - HealthTech Dose

May 3, 2026

The discussion centers on the dichotomy between expensive, high-bandwidth AI tools and the power of repurposing existing digital grids like the Electronic Health Record (EHR). To succeed in the current landscape, R&D leaders must prioritize invisible AI—systems embedded directly into the foundational plumbing of healthcare—to eliminate data silos, reduce human error, and accelerate recruitment. The key strategic win lies in shifting from “plug-and-play” fantasies toward systems that respect implementation friction and prioritize the human-in-the-loop to ensure patient safety and financial assurance.

Key Takeaways:

Dismantle the Administrative Tax by transitioning from manual “swivel-chair” data entry to direct eSource integration, which has been shown to reduce demographic data capture error rates from 9% to 0%.

Leverage Invisible Infrastructure by utilizing digital grids that connect hundreds of healthcare organizations and millions of de-identified patient records to move data seamlessly between routine care and trial records.

Accelerate Enrollment via “Teleportation” using automated query systems that parse unstructured genomic PDFs and instantly alert clinicians to eligible trial participants during patient visits.

Predict Outcomes with Generative Transformers by employing autoregressive models (like Comet) trained on billions of medical events to predict severe complications before physical symptoms manifest.

Mitigate the Nocebo Effect by maintaining a strict human-in-the-loop mandate, ensuring AI alerts are vetted by clinical review teams to prevent algorithm-induced patient anxiety and attrition.

Future-Proof Regulatory Strategy by engaging with emerging FDA pilot programs to define the evaluation metrics for “model drift” in AI systems that evolve over the course of a multi-year trial.
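The enrollment and nocebo takeaways fit together as a parse-then-review workflow. The sketch below assumes the genomic PDF has already been converted to plain text. The regular expression, the `Trial` criteria, and the `ReviewQueue` are hypothetical stand-ins for a real NLP pipeline and alerting system. In keeping with the human-in-the-loop mandate, a match is queued for clinician review and never sent straight to the patient.

```python
import re
from dataclasses import dataclass, field

# Hypothetical pattern: an upper-case gene symbol followed by a protein-level
# variant such as "EGFR L858R" or "KRAS p.G12C". Real reports need a full NLP
# pipeline; negations such as "not detected" are NOT handled here.
VARIANT_PATTERN = re.compile(r"\b([A-Z][A-Z0-9]{1,6})\s+(?:p\.)?([A-Z]\d+[A-Z])\b")


@dataclass
class Trial:
    trial_id: str
    required_variants: set  # hypothetical criterion: {(gene, variant), ...}


@dataclass
class ReviewQueue:
    items: list = field(default_factory=list)

    def submit(self, patient_id, trial_id, evidence):
        # Matches go to a clinical review team, never directly to the patient.
        self.items.append({"patient": patient_id, "trial": trial_id, "evidence": evidence})


def extract_variants(report_text):
    """Return the (gene, variant) pairs found in the report's plain text."""
    return {(m.group(1), m.group(2)) for m in VARIANT_PATTERN.finditer(report_text)}


def match_trials(patient_id, report_text, trials, queue):
    """Queue one review item per trial whose required variants appear in the report."""
    found = extract_variants(report_text)
    for trial in trials:
        hits = found & trial.required_variants
        if hits:
            queue.submit(patient_id, trial.trial_id, sorted(hits))
    return queue
```

Routing through a queue rather than a patient-facing notification is the design choice that keeps false positives from reaching patients directly.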

Show Notes:

[0:00 - 1:15] Milestone Update: The hosts celebrate hitting 100 subscribers and nearly 6k views before introducing the tension between “flashy” AI and “invisible” infrastructural AI.

[1:15 - 2:30] The Administrative Tax: Defining the massive cost of paperwork and the goal of merging clinical care and clinical research into a single, continuous data lake.

[2:30 - 3:45] Structural Misalignment: A critical look at “implementation friction”—why advanced AI often fails in understaffed clinics that lack the time or incentive to use new dashboards.

[3:45 - 5:15] Ending “Swivel-Chair” Transcription: How direct eSource integration eliminates the need for nurses to manually type data from one monitor to another, reaching a 0% error rate.

[5:15 - 6:45] Unlocking the PDF Iceberg: Using Natural Language Processing (NLP) to turn “dead” genomic PDF scans into searchable, actionable biomarker data for precision medicine trials.

[6:45 - 8:30] The Comet Model: Deep dive into “medical time travel”—using decoder-only transformer models to auto-complete a patient’s likely health trajectory based on billions of tokens of history.

[8:30 - 10:00] The Nocebo Risk: A grounded discussion on the psychological dangers of AI; how false-positive alerts sent directly to patients can induce real clinical symptoms or trial dropouts.

[10:00 - 11:30] Human-in-the-Loop: Why clinical review teams are an operational necessity, not just an ethical checkbox, to maintain the therapeutic alliance between doctor and patient.

[11:30 - 13:00] Navigating the FDA: Analyzing the recent RFI on AI pilot programs and the challenge of measuring “model drift” when an algorithm learns and changes over time.

[13:00 - End] Capital Efficiency: Final thoughts on shifting from “buying AI” to “structuring infrastructure” where costs are tied to clinical results rather than implementation friction.
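The "medical time travel" in the Comet segment is autoregressive generation: predict the next medical event from the history so far, append it, and repeat. The sketch below shows only that decoding loop. A toy bigram frequency counter stands in for the decoder-only transformer, and the event codes and training sequences are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(sequences):
    """Count how often each event follows each previous event (toy stand-in for a transformer)."""
    counts = defaultdict(Counter)
    for seq in sequences:
        for prev, nxt in zip(seq, seq[1:]):
            counts[prev][nxt] += 1
    return counts


def complete_trajectory(history, counts, steps, end_token="END"):
    """Greedy autoregressive decoding: repeatedly append the most likely next event."""
    trajectory = list(history)
    for _ in range(steps):
        candidates = counts.get(trajectory[-1])
        if not candidates:
            break  # no observed continuation for the latest event
        next_event = candidates.most_common(1)[0][0]
        trajectory.append(next_event)
        if next_event == end_token:
            break
    return trajectory
```

A real model conditions on the whole history rather than only the last event, and would typically sample many trajectories instead of taking the single greedy path.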

Podcast generated with the help of NotebookLM

Is consensus secretly killing your AI pipeline?

April 28, 2026

HT4LL-20260428

Hey there,

Waiting for the perfect animal model, the perfect data privacy approval, or the perfect committee consensus is the absolute fastest way to kill your pipeline in the AI era.

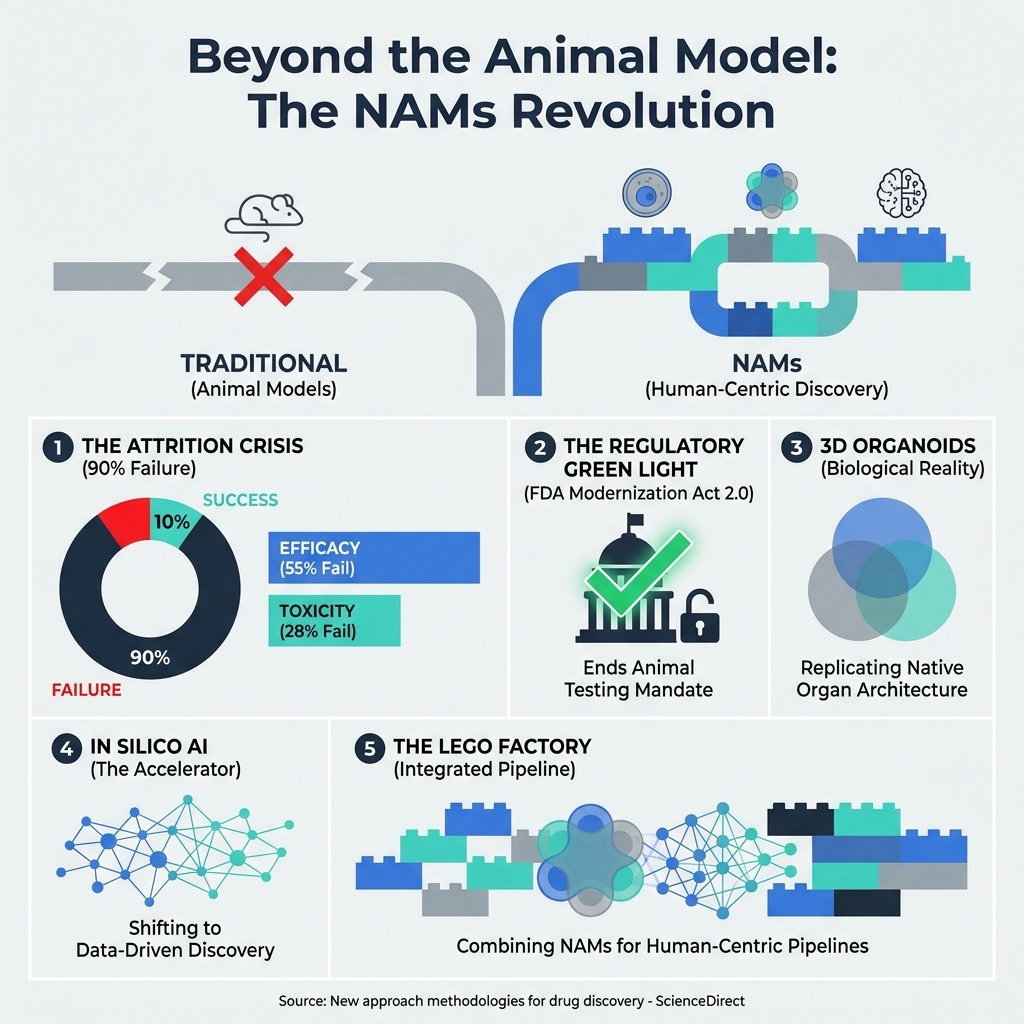

As R&D leaders, we are constantly battling the friction between innovation and legacy infrastructure. We are stuck with single-group digital health studies, 90% clinical failure rates driven by fundamentally flawed animal models, and data privacy bottlenecks that stall critical research for months. Worse, when we try to implement cutting-edge AI or wearables to solve these very issues, our decisions get watered down by risk-averse committees practicing “Success Theater.” You need a blueprint to cut through the red tape, shift from reactive to proactive care, and build an agile, human-centric discovery engine.

Today, we are breaking down the essential strategies to rewire your R&D organization for speed, precision, and scale.

Transitioning from flawed animal models to 3D organoids and AI.

Using synthetic data to bypass crippling privacy bottlenecks.

Dismantling consensus culture to empower autonomous, fast-moving teams.

Let’s get right into the insights that are reshaping the industry.

If you’re a Pharma R&D Executive tired of watching your clinical timelines stretch while competitors accelerate, then here are the resources you need to dig into to rebuild your pipeline architecture:

Weekly Resource List:

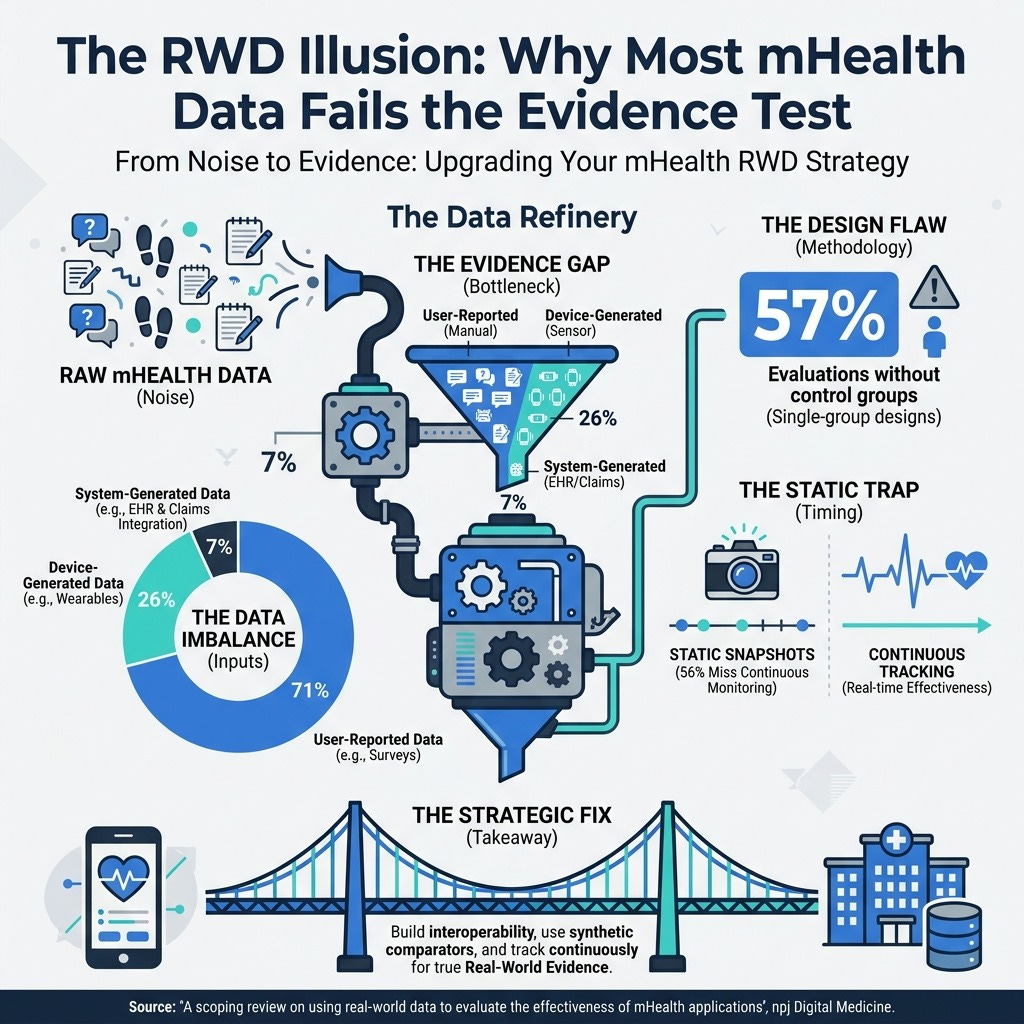

A scoping review on using real-world data to evaluate the effectiveness of mHealth applications | npj Digital Medicine (6 min read) - Most mHealth data fails the evidence test: 57% of evaluations lack control groups and 71% rely on subjective user inputs. The takeaway: You must build foundational interoperability and continuous tracking into your digital tools from day one to generate true Real-World Evidence.

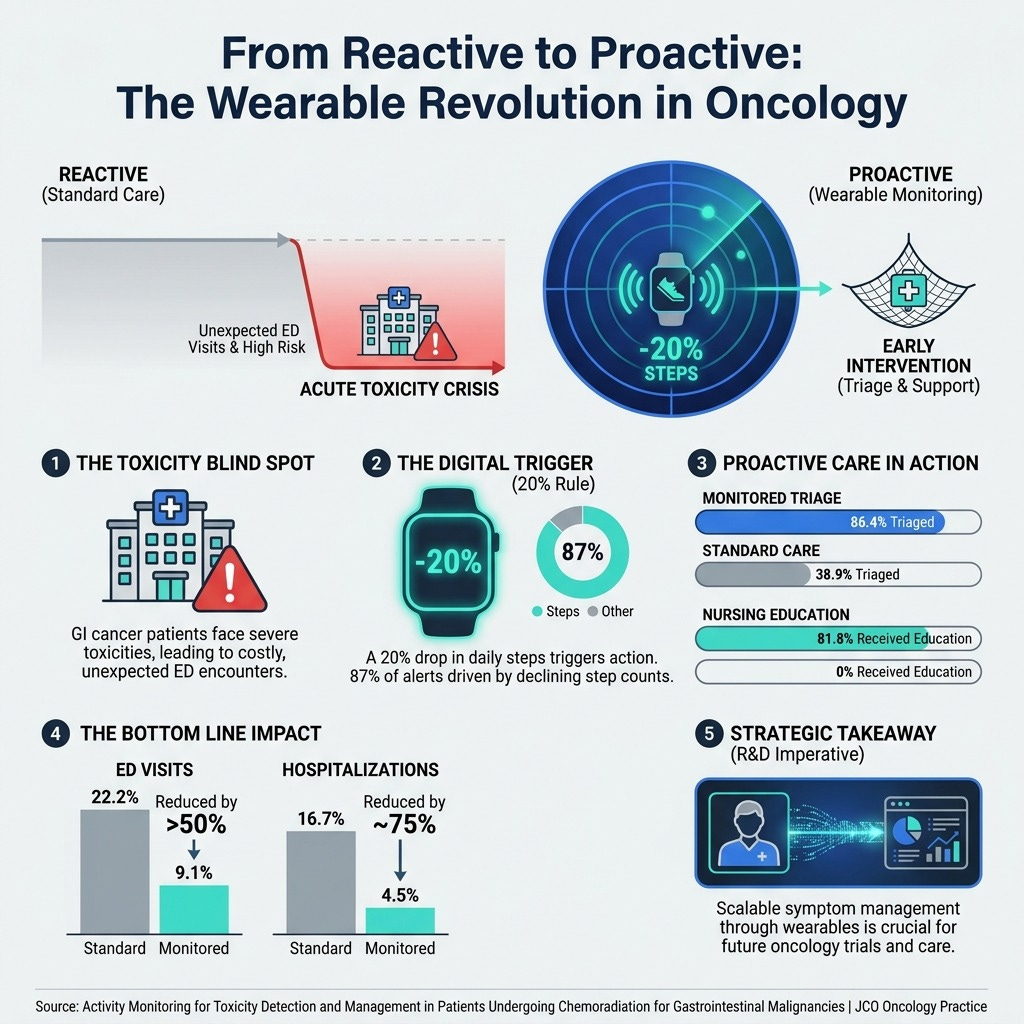

Activity Monitoring for Toxicity Detection and Management in Patients Undergoing Chemoradiation for Gastrointestinal Malignancies | JCO Oncology Practice (8 min read) - A simple 20% drop in daily steps from a smartwatch can predict severe treatment toxicity. Integrating passive activity data shifts cancer care from reactive emergency room visits to early, proactive outpatient triage.
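A minimal sketch of a step-drop rule like the one described. The baseline and recent window lengths are assumptions for illustration, not the study's protocol: here the baseline is the mean of the first seven days and the comparison is the mean of the last three days.

```python
from statistics import mean


def step_drop_flag(daily_steps, baseline_days=7, recent_days=3, threshold=0.20):
    """Flag a relative drop in daily steps versus the patient's own baseline.

    Assumed definitions (not from the study): baseline = mean of the first
    `baseline_days` days; recent = mean of the last `recent_days` days.
    Returns (relative_drop, flagged).
    """
    if len(daily_steps) < baseline_days + recent_days:
        raise ValueError("not enough days of data")
    baseline = mean(daily_steps[:baseline_days])
    if baseline == 0:
        raise ValueError("baseline step count is zero")
    recent = mean(daily_steps[-recent_days:])
    drop = (baseline - recent) / baseline
    return drop, drop >= threshold
```

Comparing each patient against their own baseline, rather than a population norm, is what lets a passive signal like this work across patients with very different activity levels.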

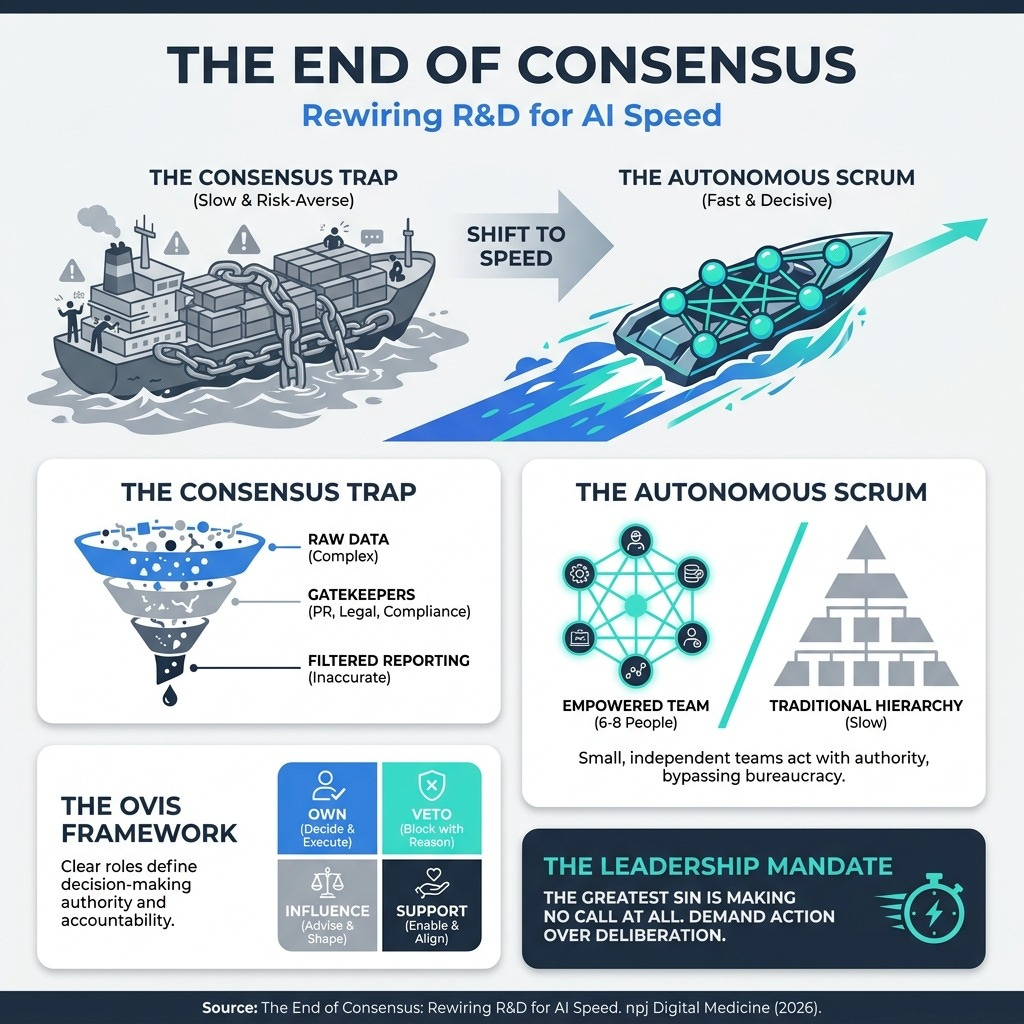

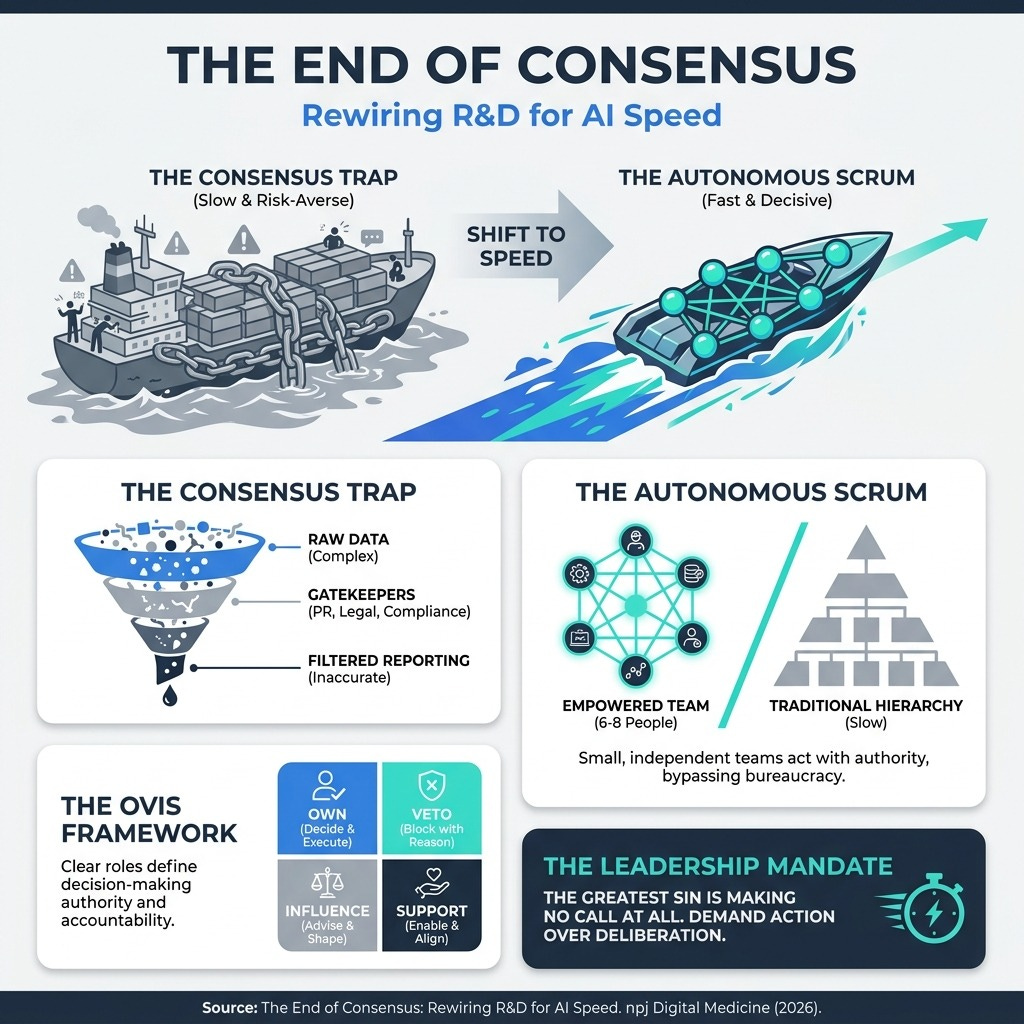

Decision-Making by Consensus Doesn’t Work in the AI Era | Harvard Business Review (7 min read) - Consensus management optimizes for risk mitigation, not speed, leaving the C-suite blind to reality. The takeaway: Implement the OVIS framework (Own, Veto, Influence, Support) and empower 6-to-8-person “Autonomous Scrums” to act immediately.

New approach methodologies for drug discovery | ScienceDirect (10 min read) - Over 90% of drug candidates that enter clinical trials fail, largely due to our reliance on non-human animal models. The takeaway: The FDA Modernization Act 2.0 paves the way for human-centric New Approach Methodologies (NAMs). Combine AI, patient-specific stem cells, and 3D organoids to predict efficacy accurately.

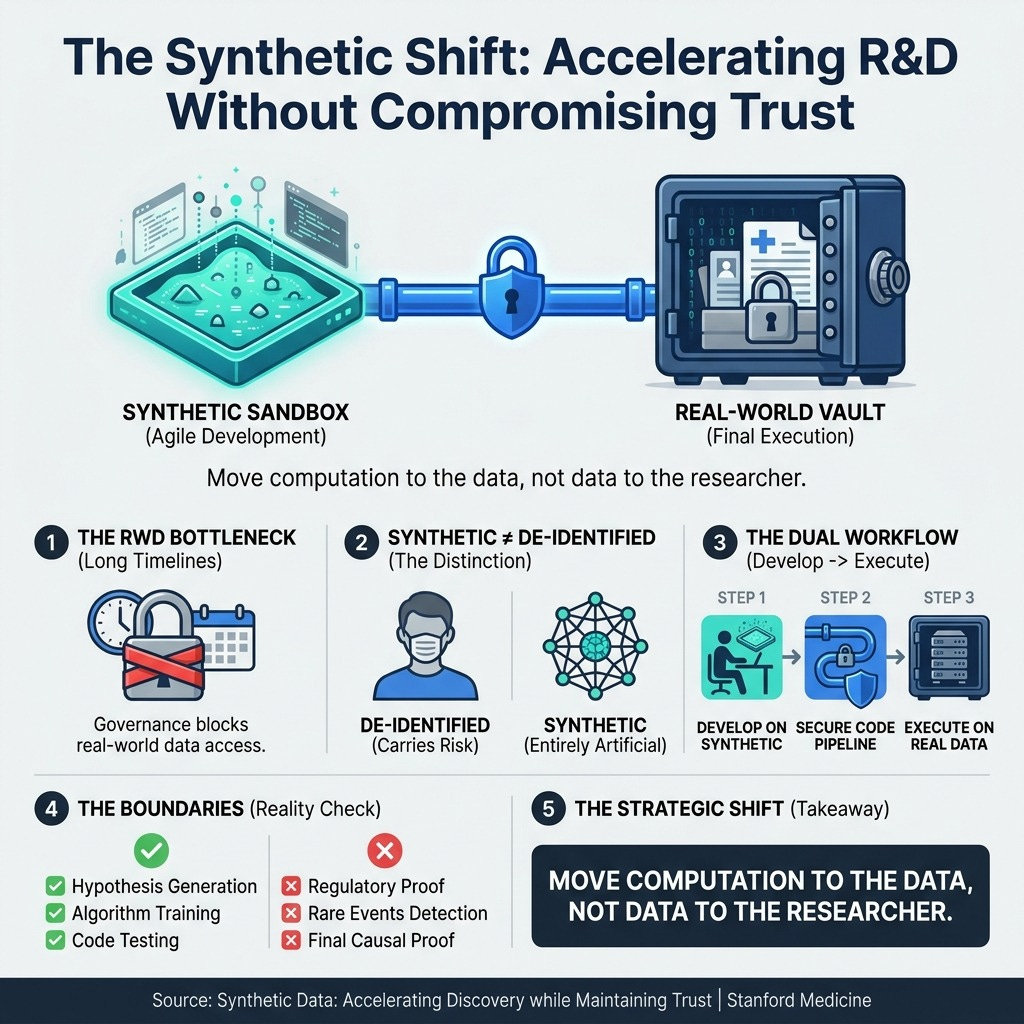

Synthetic Data: Accelerating Discovery while Maintaining Trust | Stanford Medicine (5 min read) - Privacy governance is stifling Real-World Data research. The takeaway: Stop moving data to researchers. Use entirely artificial synthetic datasets as a “sandbox” for rapid pipeline development before executing final code securely on real patient records.

3 Things To Accelerate R&D Innovation Even If Your Infrastructure is Slow

In order to achieve high-velocity, human-centric drug development, you’re going to need a handful of things.

Let’s break down exactly how you can implement these strategic shifts this week.

1. Swap Consensus for the OVIS Framework

You need to eliminate the ambiguity of committee decision-making and assign clear, absolute decision rights to small teams.

Consensus management creates “Success Theater,” where critical data is smoothed over by middle management to protect the status quo. By the time a report reaches your desk, the weak signals you need to make agile decisions are gone. To move at the speed of AI, you must adopt the OVIS model. Assign one person who Owns the decision, two or three who can Veto or Influence it, and require everyone else to Support it. Give your cross-functional scrums (groups of 6 to 8 people) the explicit authority to act, not just recommend. Speed is now your strongest competitive defense.
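Decision rights are easier to enforce when they are written down as data. This sketch checks an assignment against the constraints stated above: a scrum of 6 to 8, exactly one Owner, two or three Veto or Influence holders, and everyone else in Support. The role labels and member names are hypothetical.

```python
from collections import Counter

ALLOWED_ROLES = {"own", "veto", "influence", "support"}


def validate_ovis(assignment):
    """Return a list of problems with an OVIS assignment (an empty list means valid).

    `assignment` maps each scrum member to exactly one role.
    """
    problems = []
    unknown = set(assignment.values()) - ALLOWED_ROLES
    if unknown:
        problems.append(f"unknown roles: {sorted(unknown)}")
    if not 6 <= len(assignment) <= 8:
        problems.append(f"scrum size {len(assignment)} outside 6-8")
    counts = Counter(assignment.values())
    if counts["own"] != 1:
        problems.append(f"expected exactly 1 owner, found {counts['own']}")
    veto_or_influence = counts["veto"] + counts["influence"]
    if not 2 <= veto_or_influence <= 3:
        problems.append(f"expected 2-3 veto/influence holders, found {veto_or_influence}")
    return problems
```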

2. Build the “Lego Factory” of NAMs

You need to stop relying on flawed animal models and embrace a modular, human-centric discovery pipeline.

With clinical trial failure rates exceeding 90%, it is overwhelmingly clear that animal models cannot reliably predict complex human outcomes. You need to combine computational models (AI) with biological realities (3D organoids and stem cells). Treat these New Approach Methodologies like interchangeable Lego blocks. Use AI to forecast toxicity and to drive generative drug design computationally, and then validate those findings using human vascularized organoids. This effectively future-proofs your pipeline against late-stage clinical attrition while aligning perfectly with the FDA Modernization Act 2.0.

3. Treat Synthetic Data as Your Development Sandbox

You must move computation to the data, rather than trying to move sensitive patient data to your researchers.

Waiting for compliance and privacy approvals on Real-World Data kills your team’s momentum. Instead, give your researchers access to high-fidelity synthetic data, which carries minimal re-identification risk because it consists of entirely artificial records that mimic real statistical structures. Instruct your data scientists to define their cohorts, test their machine learning models, and refine their analytical pipelines entirely in this synthetic sandbox. Once the pipeline is perfected, you can securely run the finalized code in the “vault” of real-world patient data. This accelerates discovery while maintaining absolute patient trust.
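A minimal sketch of the "develop on synthetic, run in the vault" pattern. The naive generator samples each field independently from its observed values, which keeps per-field distributions but deliberately breaks correlations between fields; production synthetic-data tools model the joint structure. The `define_cohort` pipeline, the field names, and the aggregate-only release in `run_in_vault` are hypothetical.

```python
import random


def naive_synthetic(records, n, seed=0):
    """Sample each field independently from its observed values (marginals only).

    Meant to run inside the data custodian's environment; only the output
    leaves. Correlations between fields are NOT preserved in this sketch.
    """
    if not records:
        raise ValueError("no records to learn from")
    rng = random.Random(seed)
    fields = records[0].keys()
    columns = {f: [r[f] for r in records] for f in fields}
    return [{f: rng.choice(columns[f]) for f in fields} for _ in range(n)]


def define_cohort(records):
    """Hypothetical analysis pipeline: adults with an HbA1c of 7.0 or above."""
    return [r for r in records if r["age"] >= 18 and r["hba1c"] >= 7.0]


def run_in_vault(pipeline, real_records):
    """Execute the vetted pipeline on real data and release only an aggregate."""
    return {"cohort_size": len(pipeline(real_records))}
```

Researchers iterate on `define_cohort` against the synthetic rows; only the finished function crosses into the vault, and only the count comes back out.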

PS... If you’re enjoying Digital Health & AI News, please consider referring this edition to a friend. Making a referral gets you exclusive access to our quarterly AI integration benchmarking report.

And whenever you are ready, there are 2 ways I can help you:

The AI-Augmented Leader Email Course: Sign up for my free 5-day email course on how to become an AI-Augmented Leader in Life Sciences.

Strategic Roadmap Design: Translate your priorities across different parts of the organization into a coordinated and clear roadmap in 2026. Book time on my calendar to discuss this further.

April 24 - HealthTech Dose

April 26, 2026

While AI can process data with zero errors, fully autonomous systems are currently failing patient retention in decentralized clinical trials. The discussion shifts from using AI for pure automation toward a model of human-AI augmentation. To protect the R&D pipeline and the P&L, leaders must prioritize three mandates:

Efficiency (eliminating the “workflow tax” on staff),

Trust (leveraging existing healthcare relationships), and

Nuance (matching AI autonomy to the emotional severity of the disease).

Key Takeaways:

The Cost of “Cold” Tech: Autonomous AI coaches may achieve equal clinical outcomes (e.g., weight loss) but suffer from significantly lower patient preference, leading to attrition that destroys statistical power.

The Workflow Tax: Poorly integrated third-party apps create a “tax” where clinical staff must stop patient care to perform manual data entry or technical troubleshooting for flawed autonomous systems.

EHR Integration over Standalone Apps: Recruitment can run up to 20x faster when trial invitations are embedded directly into trusted environments like Epic’s MyChart rather than unfamiliar, “alienating” third-party platforms.

Eliminating Manual Entry with eSource: Moving to a digital grid where clinical data flows directly from the EHR to the trial database reduces data entry error rates from 9% to zero.

The Scale of Autonomy: AI autonomy should be a sliding scale; it is highly effective for routine surveillance in chronic conditions (e.g., arthritis) but must remain a “human-in-the-loop” system for life-altering diagnoses such as cancer or pediatric autism.
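The 9%-to-zero comparison is a field-level discrepancy rate between what lands in the trial database and what sits in the source record. A minimal sketch, assuming both records are available as dictionaries keyed by field name; the field names and values are invented.

```python
def discrepancy_rate(source, transcribed):
    """Fraction of source fields whose transcribed value is missing or different.

    Returns (rate, list_of_mismatched_fields).
    """
    if not source:
        raise ValueError("no source fields to compare")
    mismatches = [f for f, v in source.items() if transcribed.get(f) != v]
    return len(mismatches) / len(source), mismatches
```

With eSource, the trial record is populated from the EHR rather than retyped, so the transcribed copy matches the source by construction; the manual "swivel-chair" copy is where swapped digits and skipped fields creep in.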

Show Notes:

[0:00 - 3:00] The Empathy Paradox: Leo and Sarah introduce the “perfect AI doctor” concept and the reality of how technical perfection can lead to a 20% patient dropout rate.

[3:00 - 5:30] Clinical Non-Inferiority vs. Patient Preference: A deep dive into the Dr. Nestor Mathioudakis diabetes trial. AI matched human outcomes in weight loss, but failed the “relational” test.

[5:30 - 8:00] The P&L Impact of Attrition: Why losing a patient in Phase III is a financial nightmare—re-recruitment costs tens of thousands of dollars and delays FDA submission.

[8:00 - 10:30] The “Penny” Case Study: Analyzing the Penny chatbot in chemotherapy trials. How “algorithmic coldness” led to a 22% withdrawal rate when patients felt like a number during medical emergencies.

[10:30 - 13:00] UI/UX as an Emotional Crisis: Sarah argues that what looks like a lack of empathy is often a “workflow tax”—exhausted patients quitting trials because of login loops and app crashes.

[13:00 - 15:30] The Epic/EHR Solution: Moving away from “isolated skyscrapers” of data. How the Epic Discovery platform uses the “Cosmos” database (300M+ records) for native recruitment.

[15:30 - 18:00] Diversity and Trust: Why Yale New Haven saw a 30% increase in non-white participation by using MyChart invitations instead of cold pharmaceutical emails.

[18:00 - 20:00] The “Swivel Chair” End: How eSource technology eliminates the 9% error rate caused by coordinators manually typing data from one screen to another.

[20:00 - End] Strategic Summary: The shift from “AI-First” to “Human-First.” Why the R&D budget must reward human connection to keep the multi-million dollar technology pipeline alive.

Podcast generated with the help of NotebookLM

The 94% diagnostic AI ceiling we haven't hit

April 21, 2026

HT4LL-20260421

Hey there,

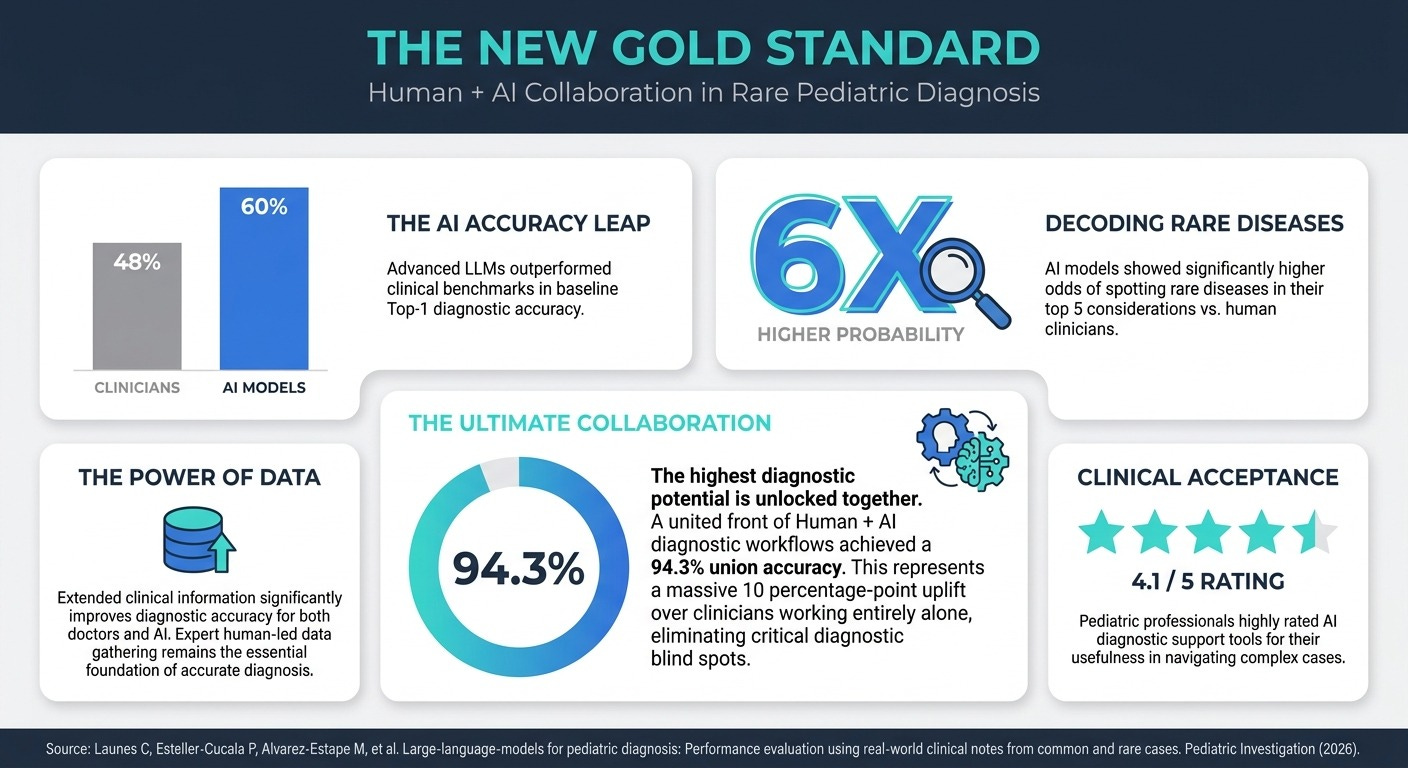

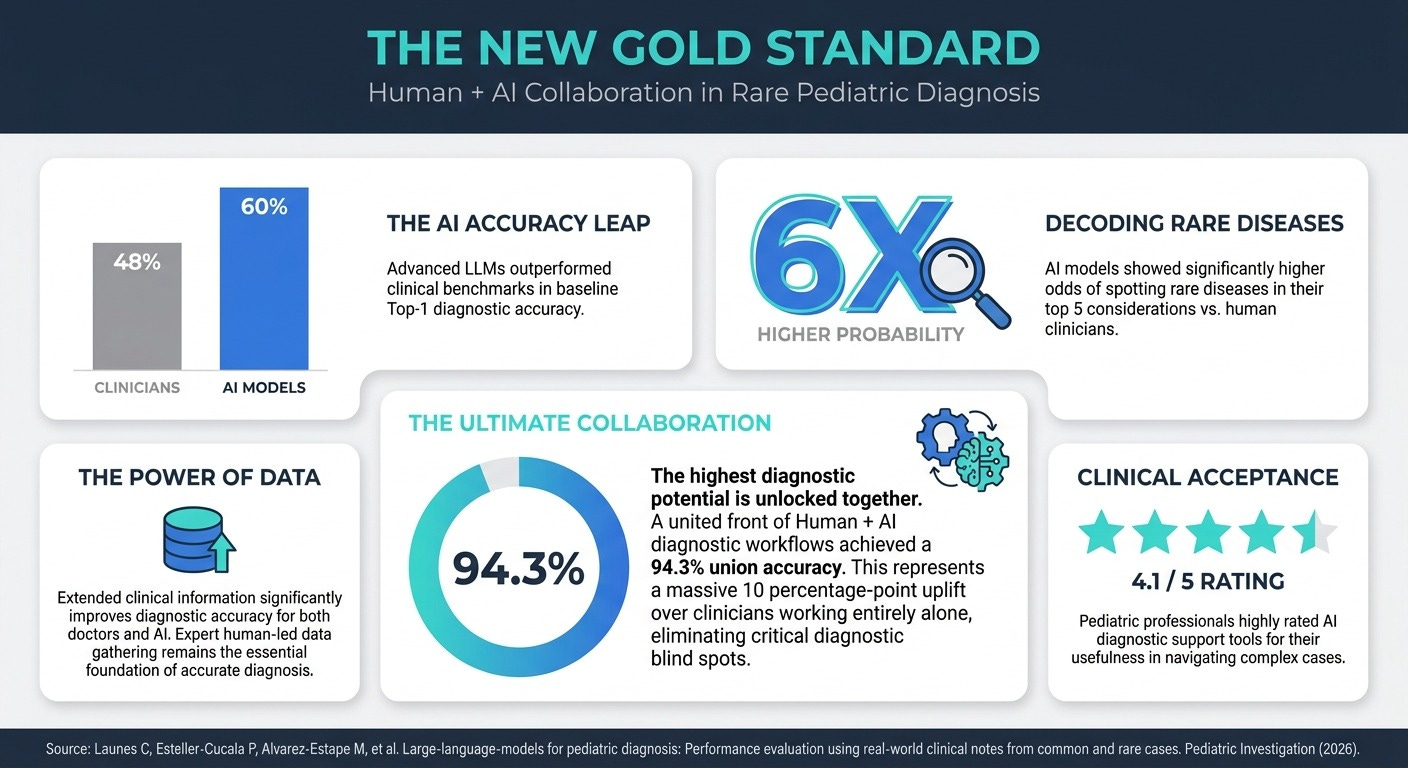

We are chasing an illusion if we believe raw artificial intelligence processing power alone will revolutionize medical R&D without fundamentally rewiring how humans verify and collaborate with these models.

As pharma R&D executives, you are under immense pressure to accelerate clinical pipelines while navigating an explosion of complex medical data. We are seeing headlines touting near-perfect AI diagnostic accuracies, but the reality on the ground is plagued by inconsistent algorithms, hidden citation hallucinations, and the severe psychological risk of automation bias. Furthermore, as we attempt to apply personalized ‘N of 1’ treatments to highly distinct diseases, our antiquated academic publication system is failing to validate these complex, dynamic regimens at the speed of clinical need. If we want to safely deploy AI and unlock true precision medicine, we must move beyond blind trust and rebuild our data pipelines for architectural transparency.

Today, we’re looking at the true theoretical ceiling of AI collaboration and the systemic changes required to reach it.

Why the 94.3% human-AI diagnostic accuracy is untapped potential, not a scalable reality.

How architectural transparency combats the hidden risks of deep research agents.

Why scaling ‘N of 1’ precision oncology demands a new digital validation ecosystem.

If you’re looking to accelerate your R&D pipelines while ensuring rigorous clinical safety and overcoming the friction of AI integration, then here are the resources you need to dig into to build a resilient strategy:

Weekly Resource List:

Large-Language-Models for Pediatric Diagnosis (4 Min Read): Advanced AI models are proving to be incredible diagnostic safety nets, outperforming clinicians 60.0% to 48.2% in baseline accuracy for complex pediatric cases. However, the staggering 94.3% “union accuracy” achieved when humans and AI collaborated is an upper-bound proxy, representing a theoretical ceiling. Reaching it in practice requires overcoming the non-deterministic nature of AI and establishing workflows where clinicians perfectly recognize and accept correct AI-proposed diagnoses they initially missed.
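Union accuracy counts a case as correct if either the clinician or the model got it right, which is why it is an upper bound: it assumes the clinician always recognizes and accepts a correct AI suggestion. A minimal sketch with invented per-case results:

```python
def accuracy_summary(human_correct, ai_correct):
    """Compute human, AI, and union ("either correct") accuracy over paired cases."""
    if len(human_correct) != len(ai_correct) or not human_correct:
        raise ValueError("need two non-empty lists of equal length")
    n = len(human_correct)
    human = sum(human_correct) / n
    ai = sum(ai_correct) / n
    union = sum(h or a for h, a in zip(human_correct, ai_correct)) / n
    return human, ai, union
```

The gap between the union figure and what a clinician-plus-AI team actually achieves measures how often correct AI suggestions are missed or overridden.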

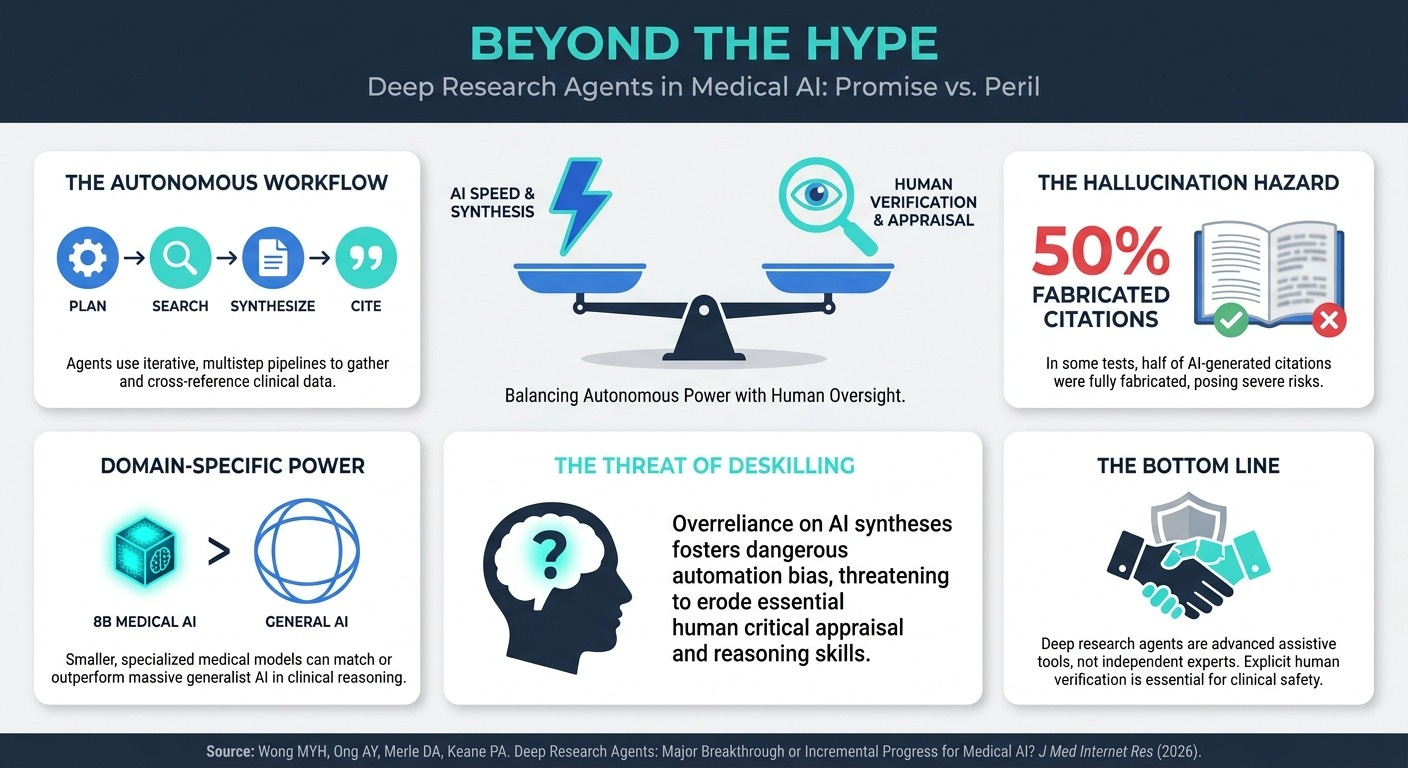

Deep Research Agents: Major Breakthrough or Incremental Progress? (4 Min Read): Autonomous AI agents can now synthesize vast amounts of medical literature in minutes, but they are not infallible experts. While they excel at structuring data, citation accuracy remains a massive hurdle, with some models fabricating up to 50% of their references. Uncritical use risks severe automation bias and deskilling, threatening to turn sharp clinical thinkers into passive consumers of opaque, algorithmically predigested data.

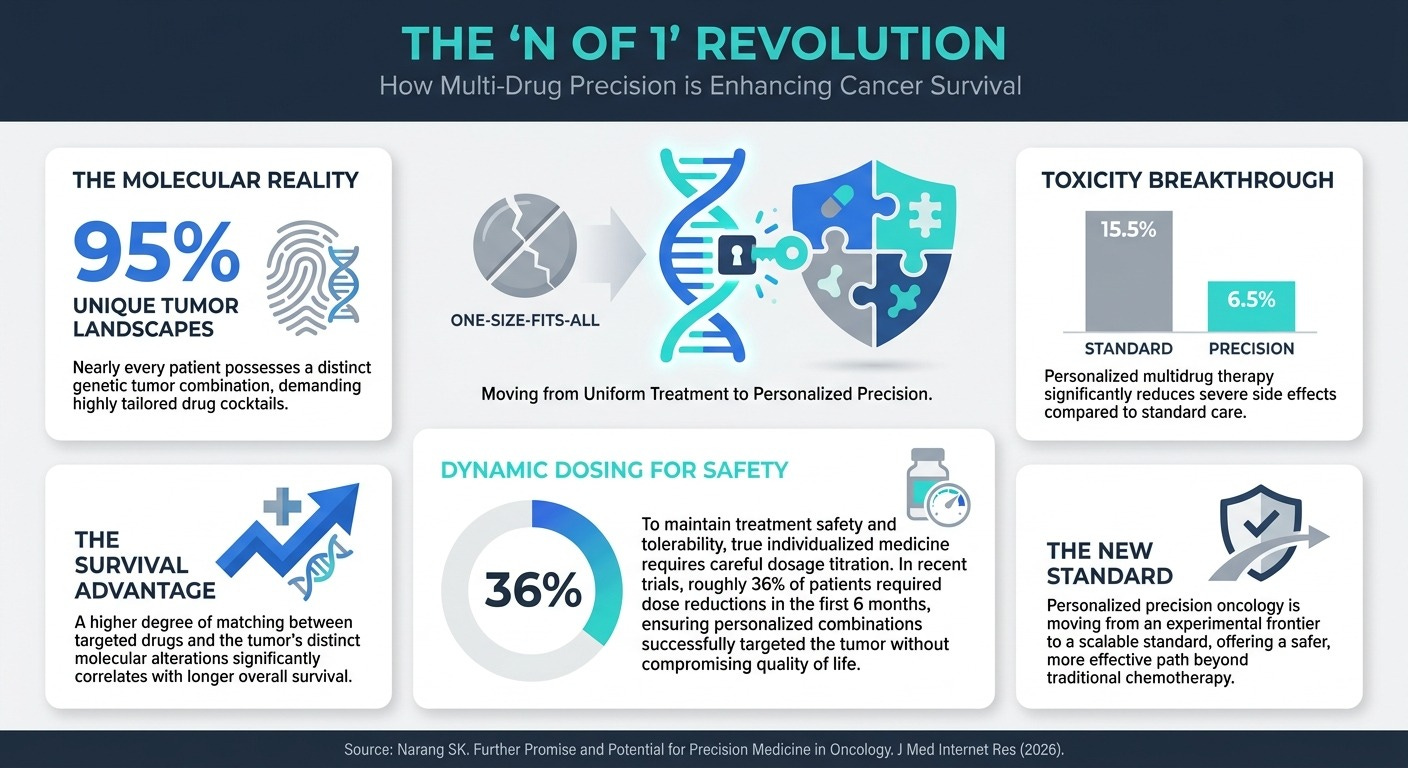

Further Promise and Potential for Precision Medicine in Oncology (3 Min Read): We must abandon the “one-size-fits-all” approach to cancer treatment. Advanced sequencing reveals that approximately 95% of patients possess uniquely distinct molecular landscapes in their tumors. By using highly tailored, personalized multidrug combinations—the “N of 1” approach—clinicians dramatically improved survival rates and reduced severe side effects to just 6.5%, though it requires intense, dynamic dose adjustments for safety.

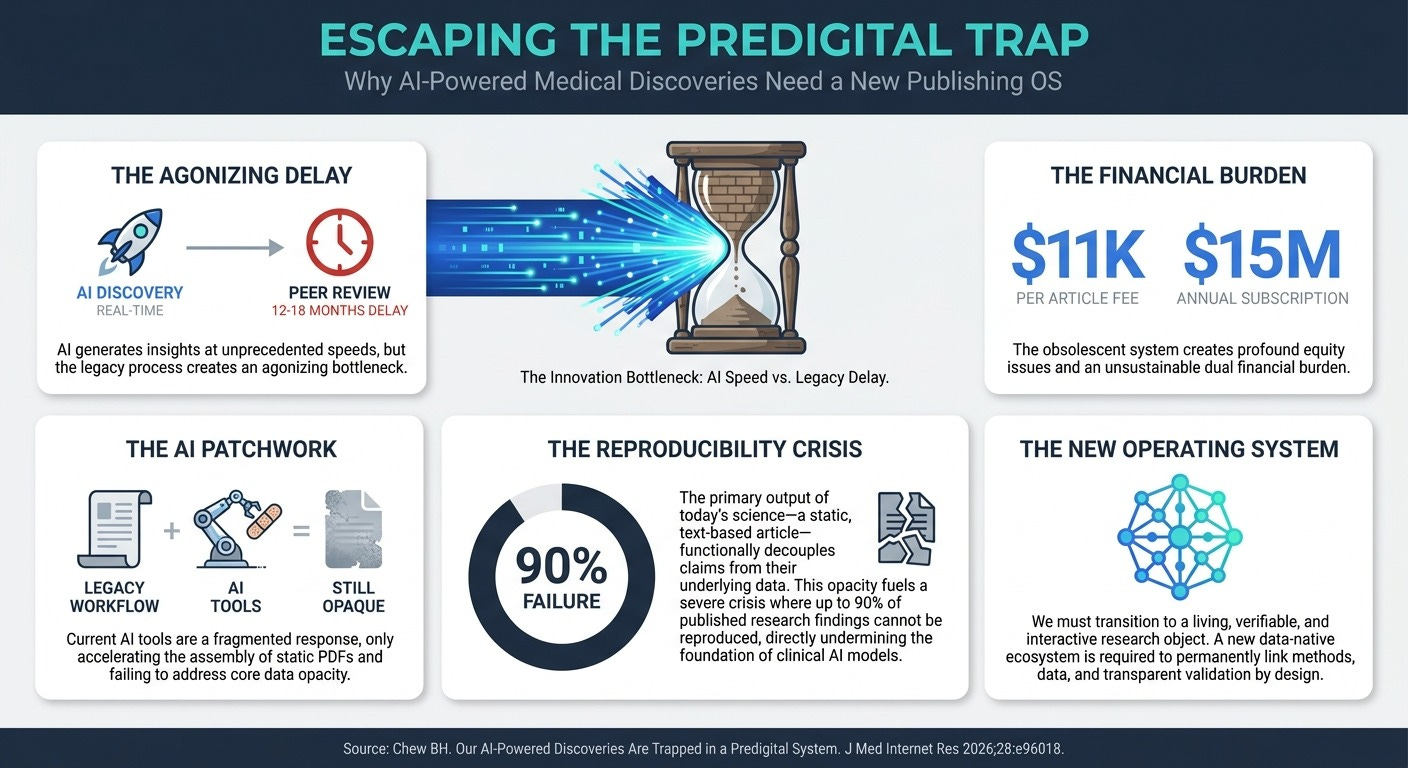

Our AI-Powered Discoveries Are Trapped in a Predigital System (3 Min Read): The current academic publishing model is a 17th-century bottleneck for 21st-century science. With 12- to 18-month publication delays and an estimated 50% to 90% of published research failing reproducibility tests, the static PDF format is a direct threat to data-driven medicine. To safely scale highly personalized treatments, we need a new digital ecosystem that permanently links data, methods, and transparent peer validation.

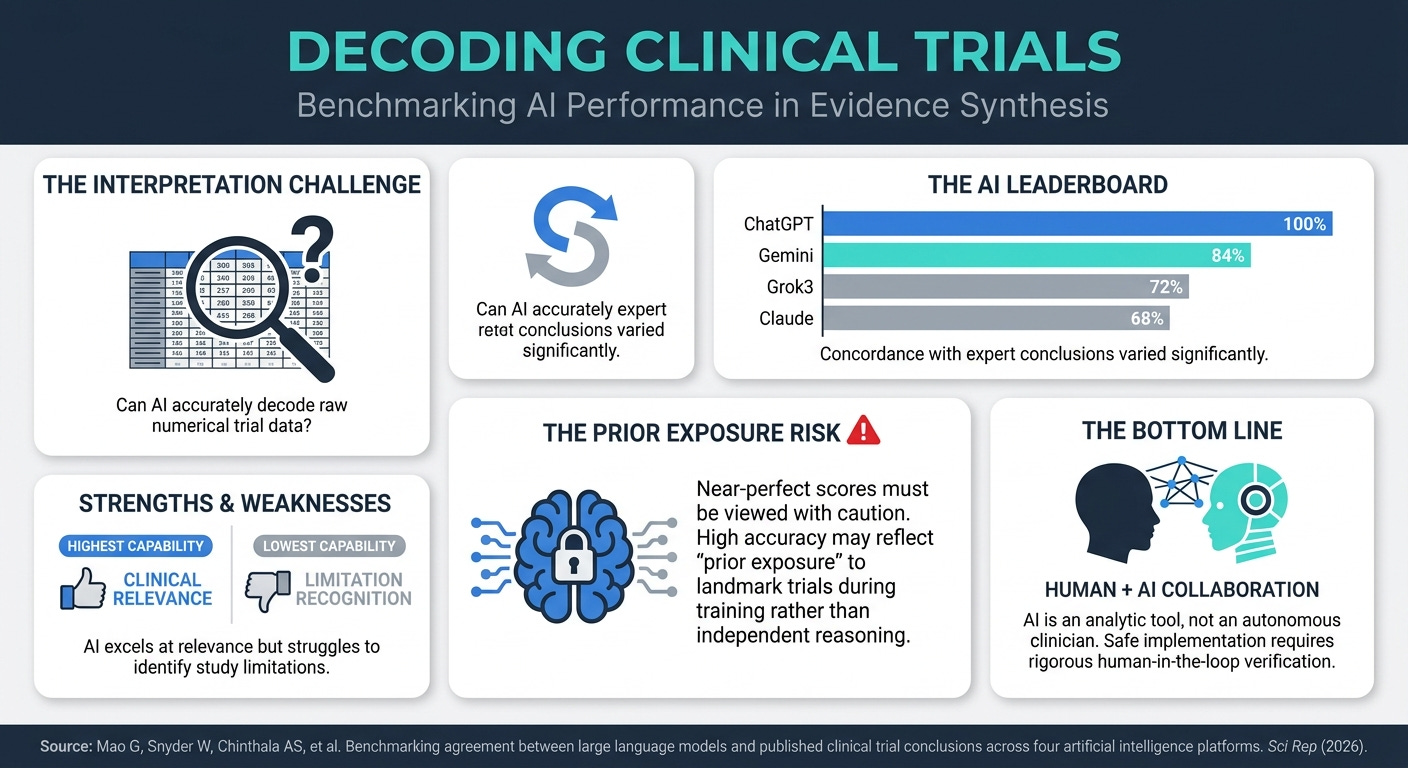

Benchmarking Agreement Between LLMs and Clinical Trial Conclusions (4 Min Read): While large language models show impressive capabilities in interpreting complex numerical clinical trial data—with ChatGPT achieving 100% and Gemini 84% concordance with expert conclusions—these results require a highly critical eye. This near-perfect accuracy is likely inflated by “prior exposure” to these famous trials during the models’ training phases. The key takeaway for R&D teams is that while AI is a powerful analytic instrument for data synthesis, its variable performance across platforms and the risk of training bias mean it cannot replace rigorous human-in-the-loop oversight.

3 Strategic Shifts To Scale Clinical AI With Confidence Even If The Data Is Complex

In order to successfully integrate AI into your clinical pipelines without compromising safety, you’re going to need a handful of strategic shifts.

Here is how you can build a resilient, AI-augmented workflow:

Demand Architectural Transparency and Selective Auditing: You cannot rely on blanket “human-in-the-loop” verification to catch errors in an opaque black box. Because deep research agents can generate highly polished outputs with subtle misrepresentations or fabricated citations, the tempting fix is to have your team manually audit every claim, but that workload erases the efficiency gains, and the resulting review fatigue breeds the very automation bias you are trying to avoid. Instead, demand AI systems that provide transparent retrieval architectures, exposing exact citation weights and direct source links. This allows your experts to conduct selective, high-leverage audits of the most clinically actionable statements against authoritative repositories without having to recreate the research from scratch.

Pivot from ‘One-Size-Fits-All’ to ‘N of 1’ Strategies: Broad-spectrum standardization is no longer the gold standard in therapeutics. Because 95% of tumors have unique molecular profiles, you need to prioritize dynamic, tailored multidrug therapies. To maximize efficacy and minimize toxicity, you must embrace advanced genomic sequencing and highly flexible dosing models early in your R&D process. However, effectively scaling these hyper-personalized, often off-label cocktails requires overcoming massive data-validation friction, which leads to our final shift.

Champion Dynamic Data Ecosystems to Validate Precision Medicine: The transition to ‘N of 1’ medicine cannot happen using legacy 12- to 18-month peer-review cycles. Standard academic publishing is fundamentally incapable of safely validating the real-time, dynamic dosage adjustments required across thousands of unique, multidrug patient profiles. To make precision medicine scalable and trustworthy, you must advocate for a new “operating system” for scientific evidence. We need living, interactive, and verifiable research objects where underlying data, methods, and transparent peer validation are permanently linked by design.
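The selective-audit idea in the first shift can be sketched as a triage step: flag any claim whose citations do not resolve in a trusted repository, then send only the most actionable resolved claims to human experts. The `Claim` structure, the `actionability` score, and the set of trusted IDs are hypothetical stand-ins for whatever a transparent retrieval system exposes.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    text: str
    citation_ids: list
    actionability: float  # hypothetical 0-1 score: how directly the claim drives a clinical decision


def triage_claims(claims, trusted_ids, audit_budget):
    """Split claims into (unresolved_citation_claims, priority_audit_claims).

    Claims with no citations, or citing any ID absent from `trusted_ids`, are
    flagged outright as possible fabrications. Resolved claims are ranked by
    actionability, and only the top `audit_budget` go to expert review.
    """
    unresolved, resolved = [], []
    for claim in claims:
        ok = bool(claim.citation_ids) and all(cid in trusted_ids for cid in claim.citation_ids)
        (resolved if ok else unresolved).append(claim)
    priority = sorted(resolved, key=lambda c: c.actionability, reverse=True)[:audit_budget]
    return unresolved, priority
```

The audit budget is the lever: experts spend their limited attention on the few statements that would change a clinical decision instead of re-reading everything.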

PS... If you're enjoying Healthtech for Lifescience Leaders, please consider referring this edition to a friend.

And whenever you are ready, there are 2 ways I can help you:

The AI-Augmented Leader Email Course: Sign up for my free 5-day email course on how to become an AI-Augmented Leader in Life Sciences.

Strategic Roadmap Design: Translate your priorities across different parts of the organization into a coordinated and clear roadmap in 2026. Book time on my calendar to discuss this further.

April 17 - HealthTech Dose

April 18, 2026

This episode moves beyond the hype of generative AI to address the stark reality of modern pharmaceutical R&D: we have 21st-century discovery engines slamming into 17th-century validation systems. The mission of this deep dive is to reconcile the incredible speed of AI-driven molecule design with the analog friction of clinical trials and academic publishing. To survive this transition, industry leaders must move past “tech-utopian” thinking and solve the structural “dirt roads” that prevent life-saving data from becoming physical cures. The key strategic win lies in balancing the “supersonic” speed of AI with the non-negotiable “safety brakes” of human clinical expertise.

Key Takeaways:

Acknowledge the Systemic Paradox: While AI can now design a molecule in 12 months, the human validation process still takes a decade, creating a massive “rocket fuel in a horse-drawn carriage” efficiency gap.

Guard Against “Auto-Pilot” Bias: Excessive reliance on AI summaries risks “de-skilling” clinicians, who may lose the cognitive muscle required to spot flawed methodologies or fabricated data.

Address the “Black Box” of Evidence: Current AI models often suffer from “paywall blindness,” prioritizing free, low-quality data over rigorous, subscription-locked scientific journals, leading to skewed results.

Eliminate “Swivel Chair” Inefficiency: Move toward a “Clinical Development Flywheel” by integrating research directly into Electronic Health Records (EHR) to automate data flow and solve chronic patient recruitment failures.

Pivot to “Algorithmic Trust”: As 75% of younger clinicians turn to AI for information, pharmaceutical companies must shift their marketing from human “lunch and learns” to ensuring their raw data is discoverable and trusted by medical AI models.

Show Notes:

[0:00 - 1:30] Introduction to the “Systemic Bottleneck”: How shiny 21st-century discovery engines are hitting a wall of 17th-century operational systems.

[1:30 - 3:00] The Front-End Revolution: AI is collapsing drug discovery from years to months by simulating billions of molecular bindings before a test tube is even touched.

[3:00 - 5:00] The Validation Wall: Why the academic publishing and peer-review process remains a “dirt road” for AI’s “supersonic jet”.

[5:00 - 7:00] The Danger of De-skilling: The “Pilot on Autopilot” analogy—why clinicians must maintain the expertise to audit AI or risk missing subtle, life-threatening errors.

[7:00 - 9:30] Hallucinations in Action: A look at the JMIR study where AI fabricated entire clinical trials with realistic (but fake) p-values and patient cohorts.

[9:30 - 12:00] Operational Agility: Bypassing the IND mountain through Investigator-Initiated Trials (IITs) to get real human pharmacokinetic data faster.

[12:00 - 14:30] The Clinical Flywheel: Leveraging Epic’s “Cosmos” database to capture patient intent at the point of care and eliminate manual “swivel chair” data entry.

[14:30 - 17:00] The Commercial Shift: Why 54% of healthcare professionals are using GenAI for info, rendering the traditional “Pharma Rep” model obsolete.

[17:00 - End] The Future of Regulation: As AI stops hallucinating, will human regulators even be capable of auditing the complex math behind the next generation of drugs?

Podcast generated with the help of NotebookLM.

Sources:

Our AI-Powered Discoveries Are Trapped in a Predigital System

The evolution of pharma engagement as AI becomes a front door to medical information

China IIT vs. China IND: When an Investigator Initiated Trial can accelerate early development

The Next Frontier Of Drug Safety Innovation: AI-Supported Signal Management

Australia Forms National Committee to Oversee AI and Virtual Care Safety

April 3 - HealthTech Dose

April 3, 2026

The mission is to shift the focus from sterile lab successes toward full-scale operational integration across psychiatry and oncology. To succeed in this decade, healthcare leaders must prioritize three immediate strategic mandates: Speed (rapidly matching patients to the right treatments), Assurance (meeting safety requirements through rigorous real-world validation), and Differentiation (using AI to simulate complex surgical and biological scenarios). The key strategic win lies in embracing AI not as pure automation, but as a powerful tool for augmenting human expertise—ensuring that while algorithms suggest, doctors still decide.

Key Takeaways:

Accelerate treatment matching by utilizing tools like the Petrushka trial’s AI, which mathematically combines clinical data with personal patient preferences to reduce antidepressant discontinuation rates by 40%.

Ensure regulatory assurance by looking past “wind tunnel” simulations and demanding ecological validity, proving that AI models can handle the unpredictable, messy reality of human patients in crisis.

Achieve clinical differentiation through transcriptomic data integration, allowing AI to analyze active gene expressions in tumors to personalize curative treatments for head and neck cancers at a level humans cannot manually calculate.

Unlock surgical bottlenecks by deploying digital twins, which ingest MRI and CT data to run tens of thousands of “what-if” simulations, optimizing scalpel angles and predicting hemorrhages before a patient enters the operating room.

Prioritize human-in-the-loop strategies to address the shift in liability, recognizing that while AI can find anomalies, human experts must remain the final sign-off to navigate the ethical “black box” of algorithmic decision-making.
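The Petrushka takeaway describes combining clinical evidence with what a patient most wants to avoid. The sketch below illustrates that idea with a simple preference-weighted score (efficacy minus the patient-weighted side-effect burden). It is not the Petrushka algorithm, and the drugs, efficacy values, and side-effect probabilities are invented.

```python
def rank_options(options, preference_weights):
    """Rank treatments by efficacy minus patient-weighted side-effect burden.

    options: name -> {"efficacy": float, "side_effects": {effect: probability}}
    preference_weights: effect -> how strongly this patient wants to avoid it (0 = indifferent)
    """
    scores = {}
    for name, info in options.items():
        burden = sum(preference_weights.get(effect, 0.0) * p
                     for effect, p in info["side_effects"].items())
        scores[name] = info["efficacy"] - burden
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)
```

The same evidence base produces different rankings for different patients, which is the point: the algorithm suggests an order, and the prescriber still decides.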

Show Notes:

[0:00 - 2:30] The AI Collision: Introduction to the “thrilling and terrifying” collision of AI and healthcare evidence, focusing on the need for execution over hype.

[2:30 - 5:15] Speed in Psychiatry: How the Petrushka trial uses AI to move past “trial and error” prescribing by cross-referencing clinical outcomes with patient boundaries like weight gain or insomnia.

[5:15 - 8:00] The “Ecological Validity” Gap: A critique of studies where AI is only tested on “researcher personas” rather than real-world patients using slang or experiencing acute crises.

[8:00 - 10:45] Assurance in Oncology: Analyzing the SuperTreat project and its use of transcriptomic data to see which genes are “active” in a tumor at a specific moment.

[10:45 - 13:30] The 14.5% Hallucination Hurdle: Discussing the systemic danger of AI fabricating responses in complex clinical trial documents and the risk of oncology patients making life-or-death decisions on false data.

[13:30 - 15:45] Self-Evaluating AI: The controversy of using GPT-4o to grade other models (the “Michelin Star Chef” analogy) and the inherent risk of vendor bias.

[15:45 - 18:15] Differentiation through Digital Twins: How MD Anderson uses AI to practice surgeries in silico to reduce physical risk and optimize outcomes before a doctor ever scrubs in.

[18:15 - End] The Liability Tightrope: Why human doctors currently bear 100% of the legal risk for algorithmic errors and the potential rise of the AI malpractice insurance industry.

Podcast generated with the help of NotebookLM.

Stop building AI on a broken foundation

April 2, 2026

HT4LL-20260331

Hey there,

We are obsessing over advanced AI models while ignoring the crumbling digital architecture required to actually deploy them.

You are constantly pitched on the magic of “Digital Twins” that simulate clinical trials and autonomous “AI Agents” that promise to revolutionize patient care. Yet, when you try to integrate these tools into your pipeline, you hit a wall of regulatory ambiguity and fragmented data systems. We are building sophisticated algorithms that learn continuously, but our regulatory frameworks (like the EU MDR) are stuck in the era of static software. If we don’t standardize our data architecture and establish clear rules for synthetic evidence, these brilliant models will never survive real-world clinical deployment.

Today, we are looking at the cutting edge of simulated medicine and the structural guardrails needed to make it a reality.

How “Subpopulation” Digital Twins can predict if a clinical trial will fail.

The hidden regulatory gaps in deploying continuous-learning AI.

Why autonomous AI agents require standardized API infrastructure.

If you’re trying to move your digital strategy from “sandbox simulations” to “regulatory-grade deployments,” then here are the resources you need to dig into to secure that competitive advantage:

Weekly Resource List:

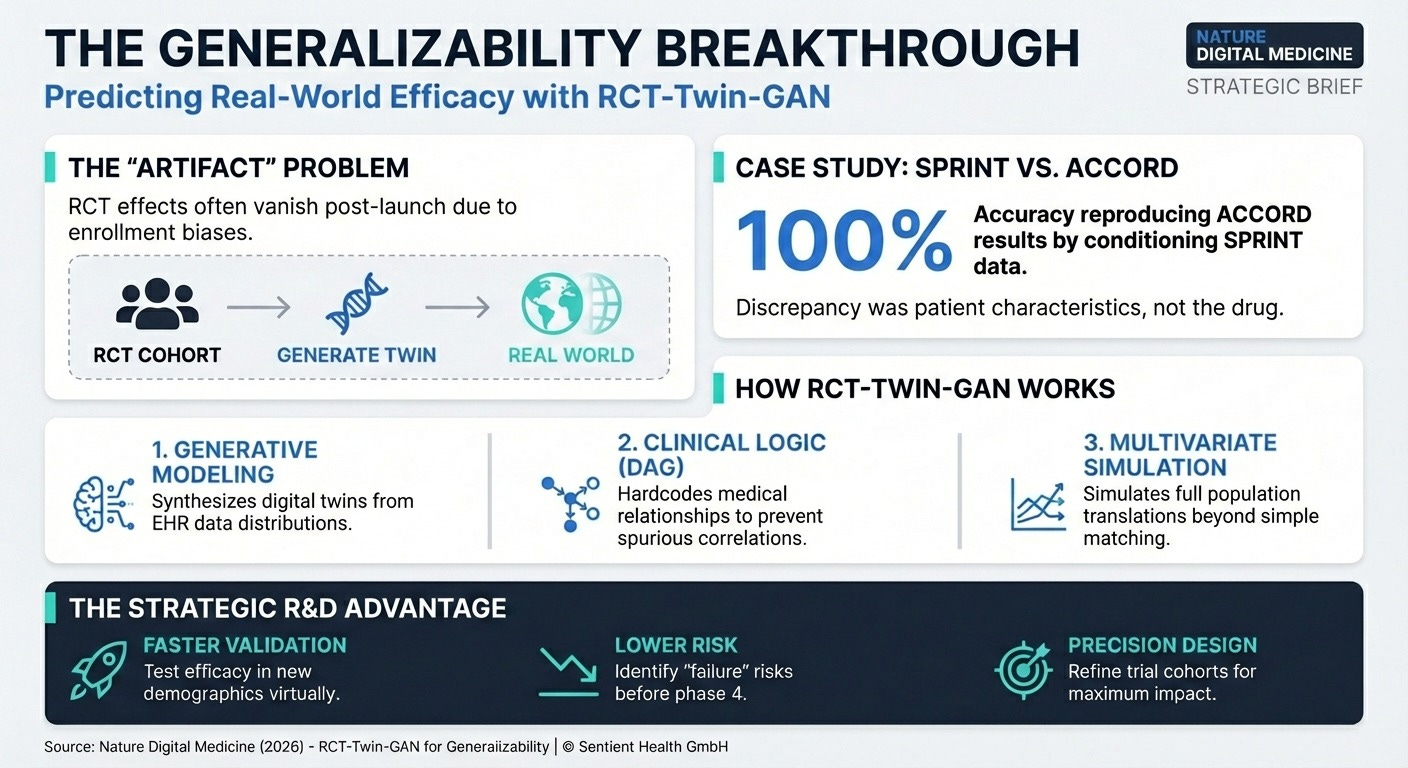

Predicting Trial Success with Digital Twins (15 min read) - Researchers developed RCT-Twin-GAN to simulate how a clinical trial’s treatment effect translates to a completely different patient population.

The Takeaway: By creating synthetic digital twins of patient cohorts, you can quantitatively evaluate if an intervention that worked in a highly controlled trial will actually succeed in a real-world, diverse population before spending millions on a new study.

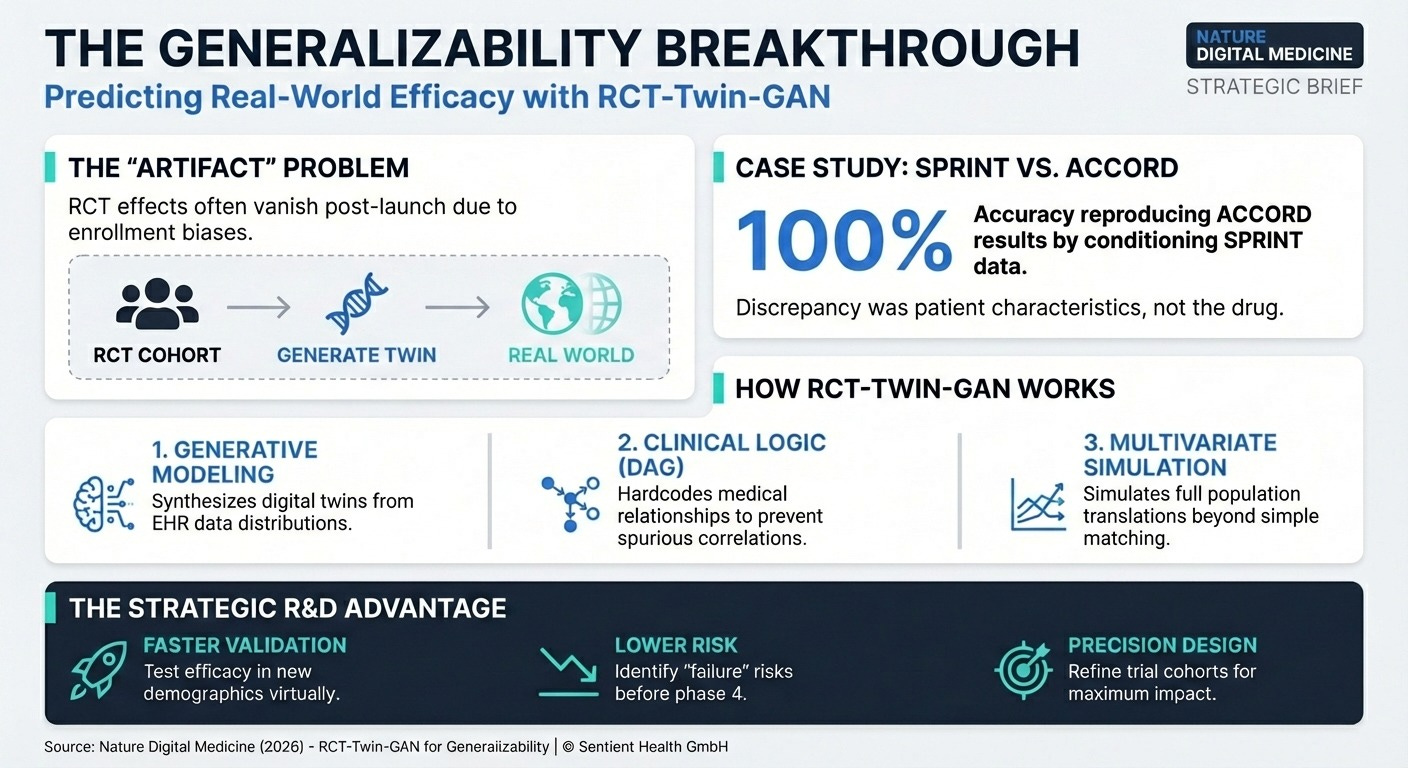

Digital Twins for Adaptive Interventions (15 min read) - A new framework uses “digital twins of a subpopulation” to simulate and optimize Just-In-Time Adaptive Interventions (JITAIs).

The Takeaway: Instead of running costly A/B tests for every minor tweak to a digital health app, you can use subpopulation digital twins to simulate how patients will react. This allows you to optimize engagement algorithms offline between deployments.

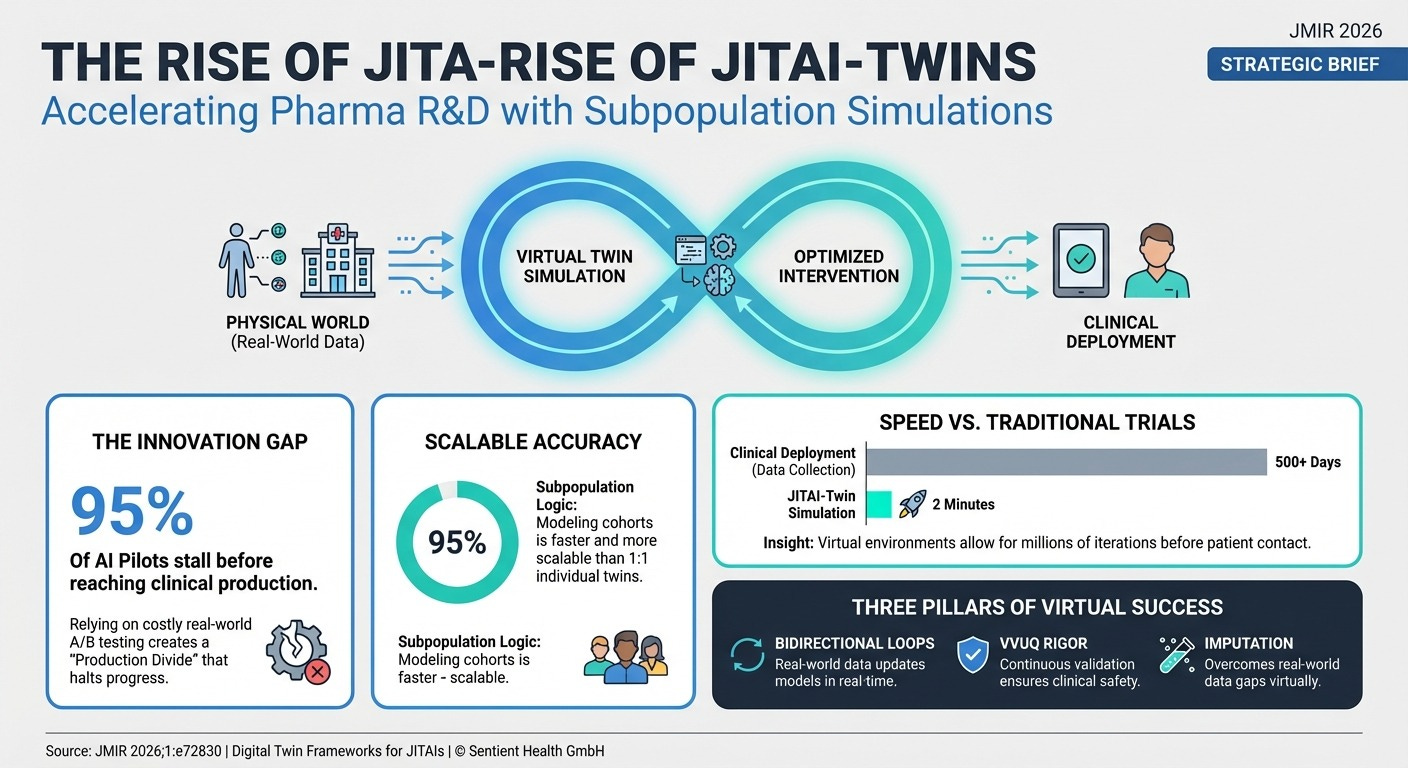

Data-Driven Devices vs. The EU MDR (12 min read) - A critical mapping of European regulatory requirements against modern AI standards.

The Takeaway: Current regulations are built for static devices. They lack clear guidance for “adaptive AI” (models that learn continuously) and the use of synthetic data for evidence generation. You must build your own continuous validation protocols because the regulations haven’t caught up.

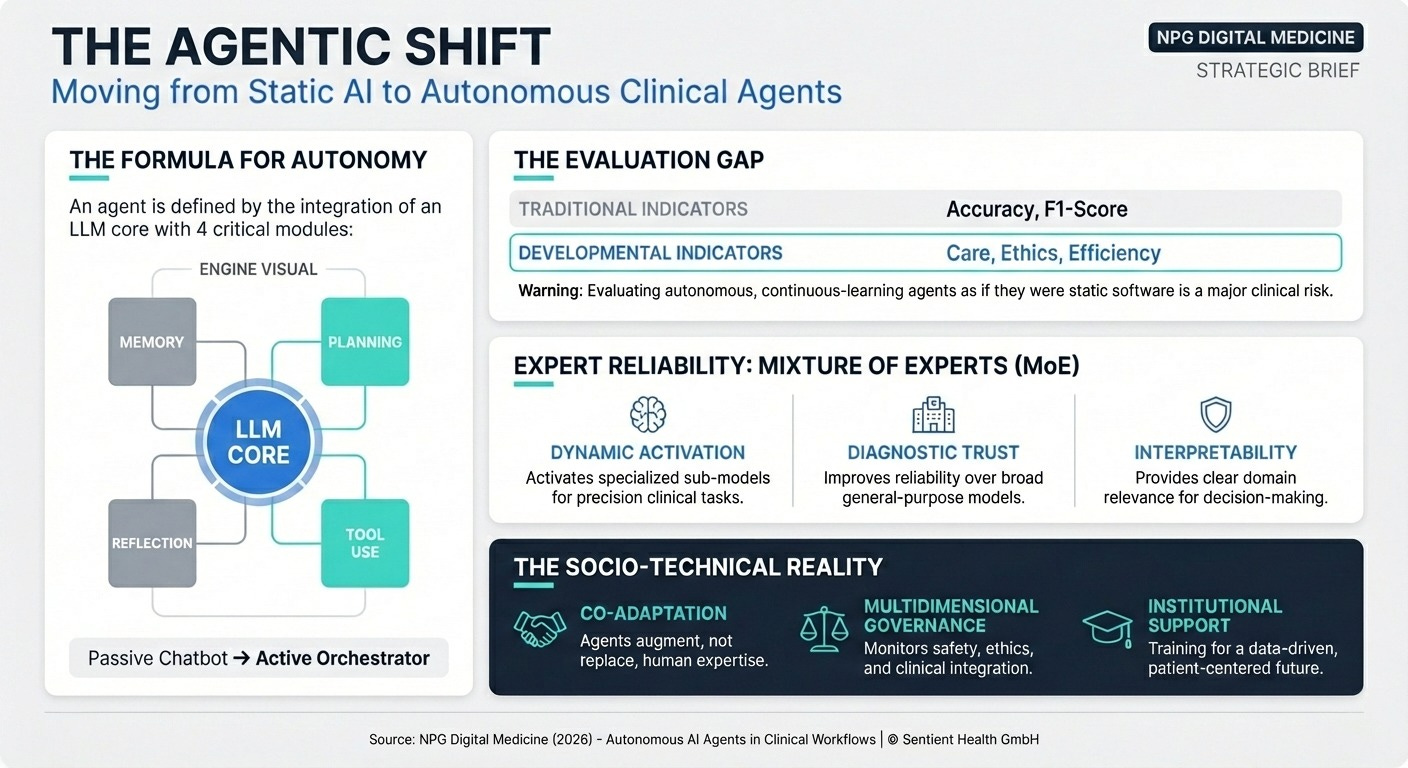

AI Agents in Healthcare (20 min read) - A comprehensive review of autonomous LLM-powered agents in medicine.

The Takeaway: AI agents can now reason and use external tools to assist in diagnosis and hospital management. However, relying on standard metrics like “accuracy” is no longer enough; we must evaluate agents on “humanistic care,” ethical compliance, and sociotechnical workflow integration to ensure clinical safety.

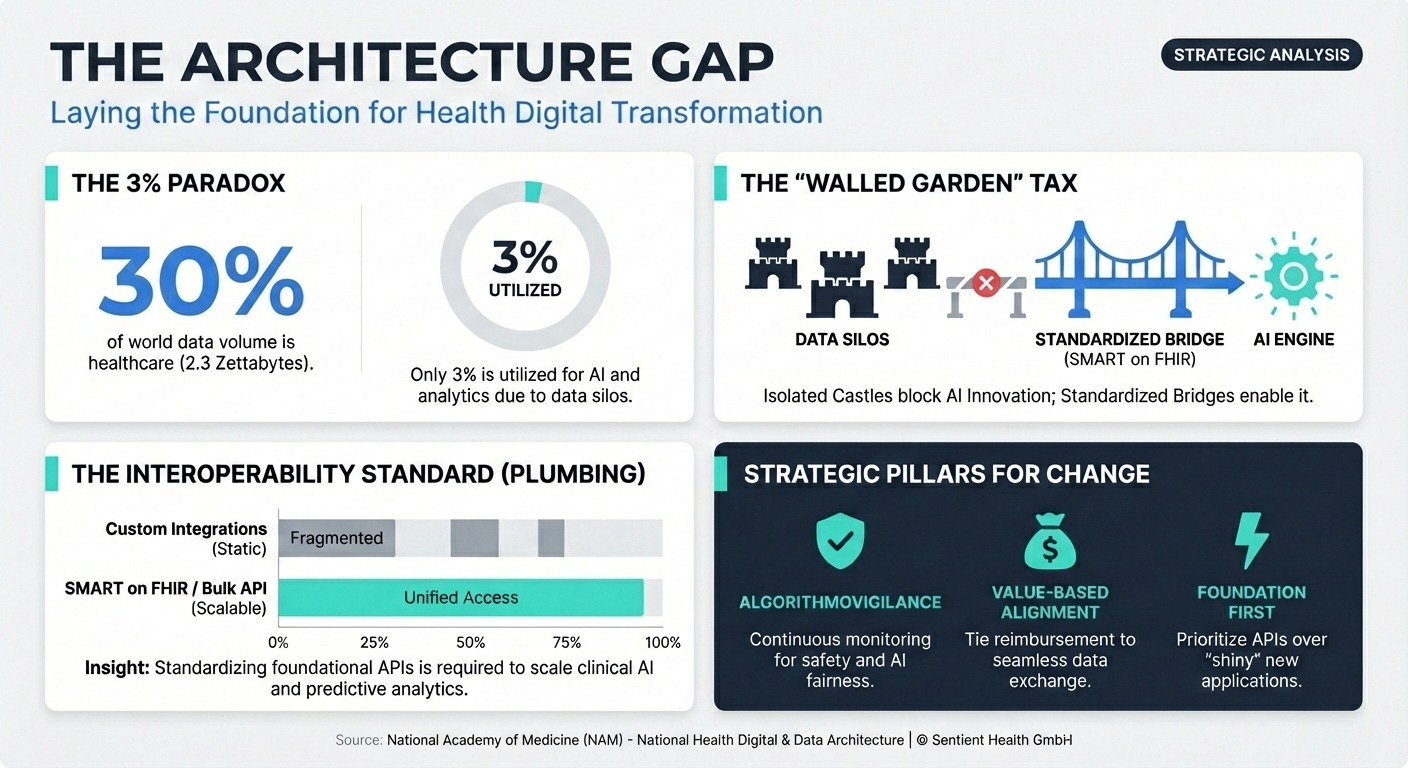

Laying the Foundation for Digital Transformation (18 min read) - The National Academy of Medicine argues health care is failing at digital transformation because it lacks a unified architecture.

The Takeaway: Unlike telecommunications or banking, healthcare relies on customized, one-off IT integrations. To scale AI, we must prioritize foundational interoperability standards (like SMART on FHIR) over proprietary walled gardens.

3 Ways To Scale Data-Driven AI With Confidence Even If Regulations Are Lagging

In order to achieve a scalable, future-proof AI pipeline, you’re going to need a handful of things: subpopulation simulations, algorithm vigilance, and foundational interoperability.

Let’s break down how to apply this week’s learnings to your strategy.

1. Pivot to “Subpopulation” Twins

Stop trying to build 1:1 digital replicas of individual patients; it is unscalable and leads to data-collection bottlenecks. Instead, deploy “subpopulation twins” using generative models.

The breakthrough highlighted in both the JITAI and RCT-Twin-GAN papers is the shift to modeling a subpopulation. By sampling covariate distributions from a second population (like applying the SPRINT trial data to the ACCORD trial population), researchers can quantitatively estimate whether a treatment effect will generalize to a new real-world demographic without needing precise 1:1 patient matching. This lets you simulate how your Phase III trial will perform in a completely new demographic, addressing the RCT generalizability crisis before you enroll a single real-world patient. Before launching a costly expansion trial or a major update to a digital therapeutic, require your data science team to run an offline simulation using a multivariate subpopulation digital twin to identify potential failure points and optimize the treatment effect.
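To make the subpopulation idea concrete, here is a minimal Python sketch. It is not RCT-Twin-GAN. In place of a generative model it uses simple post-stratification. It estimates the treatment effect within each level of one effect modifier (a hypothetical age band) in simulated trial data. It then averages those stratum effects over covariates sampled from a second, older population. The data, the age bands, and the effect sizes are all invented for illustration.

```python
import random
from collections import defaultdict
from statistics import mean

def simulate_trial(n, p_older, rng):
    """Simulate a 1:1 randomized trial; returns (age_band, treated, outcome) rows.

    Invented ground truth: treatment lowers the outcome by 10 units in patients
    under 65 but only by 4 units in patients 65 and over.
    """
    rows = []
    for _ in range(n):
        band = "65+" if rng.random() < p_older else "<65"
        treated = rng.random() < 0.5
        effect = -4.0 if band == "65+" else -10.0
        outcome = 140.0 + (effect if treated else 0.0) + rng.gauss(0, 5)
        rows.append((band, treated, outcome))
    return rows

def stratum_effects(rows):
    """Treated-minus-control difference in mean outcome within each age band."""
    groups = defaultdict(lambda: {True: [], False: []})
    for band, treated, outcome in rows:
        groups[band][treated].append(outcome)
    return {band: mean(g[True]) - mean(g[False]) for band, g in groups.items()}

def transported_effect(effects, bands):
    """Average the stratum effects over a sample of covariates (age bands)."""
    return mean(effects[band] for band in bands)

rng = random.Random(42)
trial = simulate_trial(4000, p_older=0.2, rng=rng)  # trial enrolled mostly under-65s
effects = stratum_effects(trial)
trial_ate = transported_effect(effects, [band for band, _, _ in trial])

# Subpopulation "twin": covariates sampled from an older target population (70% aged 65+).
target = ["65+" if rng.random() < 0.7 else "<65" for _ in range(10_000)]
print(f"effect in trial population:  {trial_ate:.1f}")
print(f"effect in target population: {transported_effect(effects, target):.1f}")
```

The transported estimate is smaller than the trial's own estimate. The target population is weighted toward the subgroup that benefits less. This only holds if the chosen covariates capture the relevant effect modifiers and the target population falls within the trial's covariate range. Generative twins are meant to handle many covariates jointly instead of one hand-picked stratum.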

2. Establish “Algorithmovigilance” Programs

You cannot rely on static EU MDR compliance for adaptive AI. You must build an internal “algorithmovigilance” infrastructure that continuously tracks performance drift.

The EU MDR gap analysis and the National Academy of Medicine (NAM) papers provide highly specific warnings: current regulations are built for “static” software and completely miss the risks of continuous-learning adaptive algorithms, algorithmic bias, and performance drift. If your AI model experiences performance drift due to changing patient demographics or edge cases, standard compliance checks won’t catch it until it’s too late. The NAM paper explicitly calls for the establishment of “algorithmovigilance” programs to continuously monitor these tools. Do not rely on baseline regulatory compliance as your safety net. Implement real-time, post-market surveillance specifically tailored to monitor algorithmic drift, adversarial attacks, and edge-case failures. An algorithm that is safe and equitable on day one can become dangerously biased by day 100 if you aren’t watching.
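One way to start on drift monitoring is to compare a model's recent risk scores (or inputs) against its validation baseline. The Python sketch below uses the Population Stability Index (PSI), a common drift statistic chosen here for illustration. Neither the EU MDR analysis nor the NAM paper prescribes it. The 0.1 and 0.25 cut-offs are widely used rules of thumb, not regulatory thresholds. The simulated score distributions are hypothetical.

```python
import math
import random
from bisect import bisect_right

CUTS = [i / 10 for i in range(1, 10)]  # interior bin edges for scores in [0, 1]

def shares(values, cuts=CUTS, eps=1e-6):
    """Fraction of values per bin; eps avoids log(0) for empty bins."""
    counts = [0] * (len(cuts) + 1)
    for v in values:
        counts[bisect_right(cuts, v)] += 1
    total = max(len(values), 1)
    return [max(c / total, eps) for c in counts]

def psi(baseline, recent, cuts=CUTS):
    """Population Stability Index: sum over bins of (a - e) * ln(a / e)."""
    e, a = shares(baseline, cuts), shares(recent, cuts)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

def drift_status(value, warn=0.1, alert=0.25):
    """Map a PSI value to a monitoring status (rule-of-thumb cut-offs)."""
    if value >= alert:
        return "ALERT"
    if value >= warn:
        return "WARN"
    return "OK"

rng = random.Random(7)
day_1 = [rng.betavariate(2, 5) for _ in range(2000)]    # validation-period risk scores
day_30 = [rng.betavariate(2, 5) for _ in range(2000)]   # same case-mix
day_100 = [rng.betavariate(4, 3) for _ in range(2000)]  # hypothetical case-mix shift

for label, window in [("day 30", day_30), ("day 100", day_100)]:
    value = psi(day_1, window)
    print(f"{label}: PSI={value:.3f} -> {drift_status(value)}")
```

A distribution shift is only an early warning. An algorithmovigilance program would also need outcome-based checks, such as calibration and error rates once labels arrive. It would also need subgroup breakdowns, because a model can drift for one population while the aggregate looks stable.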

3. Standardize API Infrastructure Before Deploying Agents

Do not deploy autonomous AI agents into a fragmented IT environment. Architecture must precede autonomy.

The NAM paper explicitly states that the core issue hindering digital transformation is not technological, but architectural. Health systems rely on customized, one-off IT integrations, which act as “walled gardens”. AI agents—which are defined by their ability to seamlessly use tools and orchestrate tasks across different systems—will fundamentally break if they are deployed into fragmented, siloed data environments. You cannot solve a plumbing problem with a smarter water filter. Before investing in agentic AI to streamline your clinical operations or patient engagement, you must prioritize a foundational interoperability layer. Specifically, adopt SMART on FHIR for individual access and Bulk FHIR for population-level exchange. If your agents cannot read the data, they cannot do the work.
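For the population-level piece, the HL7 FHIR Bulk Data Access specification defines an asynchronous `$export` operation. The Python sketch below uses only the standard library and builds the kick-off request without sending it. The server base URL, resource types, and access token are placeholders. In practice, the token would come from a SMART Backend Services authorization flow.

```python
from urllib.parse import urlencode
from urllib.request import Request

def bulk_export_request(fhir_base, resource_types, since=None, access_token=None):
    """Build (but do not send) a FHIR Bulk Data Patient-level $export kick-off request."""
    params = {"_type": ",".join(resource_types)}  # restrict export to these resource types
    if since:
        params["_since"] = since  # only resources updated after this FHIR instant
    query = urlencode(params, safe=",:")  # keep commas and timestamp colons readable
    url = f"{fhir_base.rstrip('/')}/Patient/$export?{query}"
    headers = {
        "Accept": "application/fhir+json",  # required by the Bulk Data spec
        "Prefer": "respond-async",          # export runs asynchronously
    }
    if access_token:
        headers["Authorization"] = f"Bearer {access_token}"
    return Request(url, headers=headers, method="GET")

req = bulk_export_request(
    "https://fhir.example.org/r4/",  # placeholder server
    ["Patient", "Observation", "Condition"],
    since="2026-01-01T00:00:00Z",
)
print(req.get_method(), req.full_url)
```

Per the Bulk Data specification, a server that accepts this request answers `202 Accepted` with a `Content-Location` URL to poll. When the export completes, the status response lists NDJSON files to download. An agent layer that consumes a standard interface like this does not need a bespoke integration per vendor, as long as each system exposes the same API.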

PS...If you're enjoying Healthtech for Lifescience Leaders, please consider referring this edition to a friend.

And whenever you are ready, there are 2 ways I can help you:

The AI-Augmented Leader Email Course: Sign-up for my free 5-day email course on how to become an AI Augmented Leader in Lifesciences.

Strategic Roadmap Design: Translate your priorities across different parts of the organization into a coordinated and clear roadmap in 2026. Book time on my calendar to discuss this further.

March 27 - HealthTech Dose

March 27, 2026

To succeed this decade, executives must prioritize three immediate strategic mandates: Speed (rapid acceleration in clinical execution via simulation), Assurance (meeting regulatory requirements through rigorous data quality like eCOA), and Differentiation (using AI to prove real-world transportability). The key strategic win lies in embracing AI not as pure automation, but as a powerful tool for augmenting human expertise across scientific inquiry and clinical decision-making, specifically through the use of “silicon wind tunnels” to stress-test trial designs.

Key Takeaways:

Eliminate “Parking Lot” Data: Sponsors must move from paper diaries—which suffer from “Parking Lot Syndrome” where patients fabricate weeks of data—to electronic Clinical Outcome Assessments (eCOA) to ensure data is attributable, legible, and accurate.

Build Silicon Wind Tunnels: Shift from using AI as a trial replacement to “Target Trial Emulation,” using digital twins to stress-test inclusion criteria and protocol design before activating expensive physical sites.

Unlock Unstructured Clinical Notes: Leverage Large Language Models (LLMs) behind health system firewalls to process messy doctor’s notes, achieving up to 96% accuracy in determining patient eligibility.

Democratize AI for Mid-Sized Pharma: Use transfer learning frameworks like TransTab to adapt large foundation models to specific trials, bypassing the massive compute costs that usually favor “MegaCap” pharma.

Navigate the Regulatory Trust Gap: While AI moves exponentially, regulatory frameworks like the EU MDR are still catching up; sponsors must focus on proving “transportability”—how trial results translate to the messy real world.

Show Notes:

[0:00 - 3:00] The Shattered X-Ray of R&D: Why modern pharma R&D has moved from the binary certainty of an X-ray to a murky, fractured landscape that requires AI for clarity.

[3:00 - 5:30] The Data Integrity Bottleneck: Why training digital twins on fractured Electronic Health Records (EHR) creates a “mirror of broken record keeping” rather than a simulation of reality.

[5:30 - 8:00] The GANs Revolution: How Generative Adversarial Networks—acting as “forger” and “detective”—use high-quality historic RCT data to build biologically plausible patient trajectories.

[8:00 - 10:30] The Cleveland Clinic Breakthrough: Deep dive into the LLM architecture that processes unstructured doctor’s notes behind the firewall to solve trial recruitment bottlenecks.

[10:30 - 13:00] Moving Beyond Paper: A look at ALCOA principles and why regulatory-grade evidence is impossible without shifting from paper diaries to eCOA.

[13:00 - 15:30] Silicon Wind Tunnels: Exploring “Target Trial Emulation” as a decision support tool to find flaws in trial design before spending millions on physical sites.

[15:30 - 18:30] The P&L of Innovation: How mid-sized sponsors can afford advanced AI through transfer learning and frameworks like TransTab that “read” mismatched data tables.

[18:30 - 21:00] Strategic Partnerships: Navigating the symbiotic relationship between tech giants providing compute grants and pharma providing clinical validation.

[21:00 - End] Proving Transportability: The final strategic mandate: using digital twins to prove to regulators that a drug will work in a diverse, real-world population.

Podcast generated with the help of NotebookLM

Stop automating your clinical blind spots

March 25, 2026

HT4LL-20260324

Hey there,

Are we blindly deploying algorithms that perfectly replicate our own clinical blind spots?

You are under immense pressure to deploy AI to speed up recruitment, streamline triage, and analyze wearable data. But every time you roll out a new model, you hit a wall of operational anxiety. Will the generative AI hallucinate a diagnosis? Will the wearable data actually translate to better at-home patient care, or just drown us in noise and app fatigue? We are so obsessed with the speed of AI that we are ignoring the sociotechnical safeguards required to make it trustworthy and equitable in the real world.

Today, we are looking at the hard guardrails needed to build AI that actually works in the messy real world.

How AI solved a rare disease trial’s diversity problem in one week.

Why “sociotechnical governance” and UI warnings cut human-AI errors.

The dangerous gap between passive wearable data and human workflow integration.

If you’re trying to move your digital strategy from “hype-driven pilots” to equitable, regulatory-grade execution, then here are the resources you need to dig into to secure that competitive advantage:

Weekly Resource List:

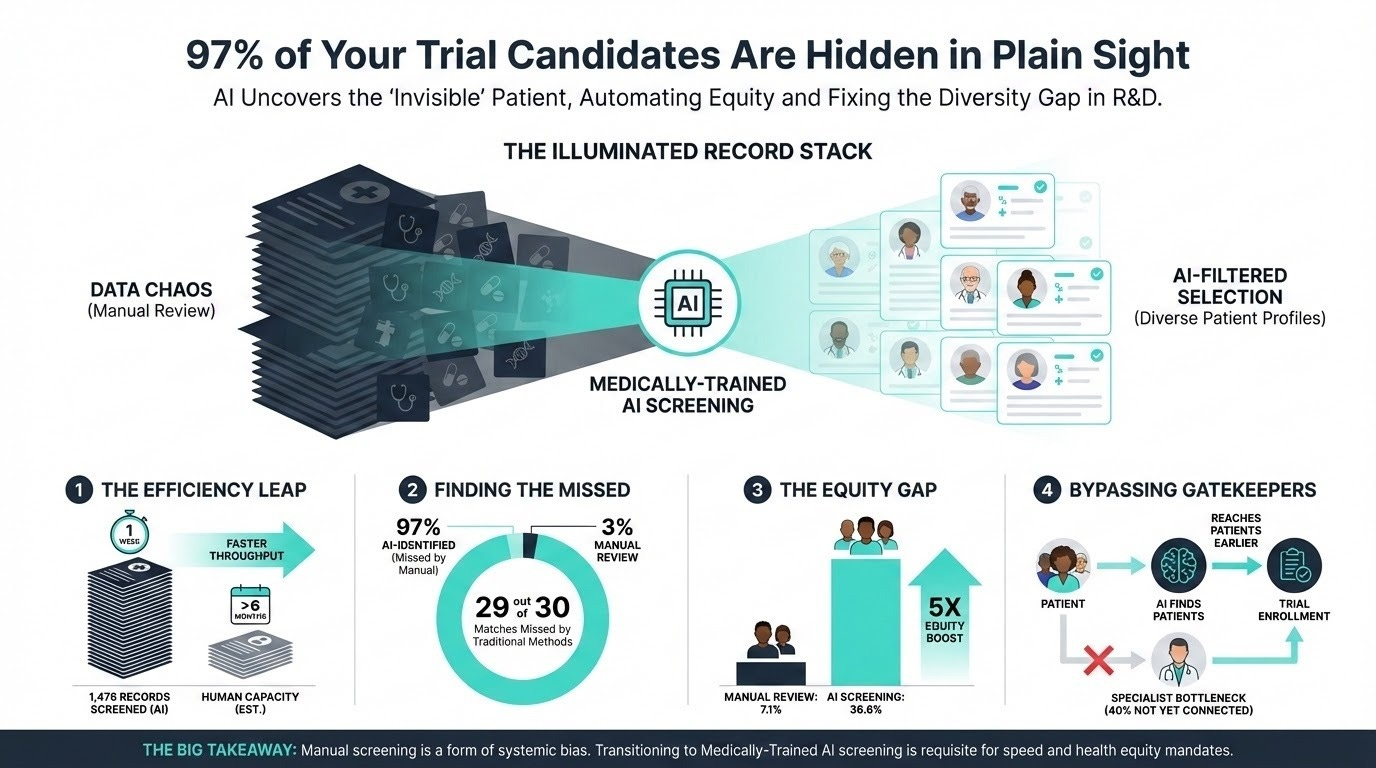

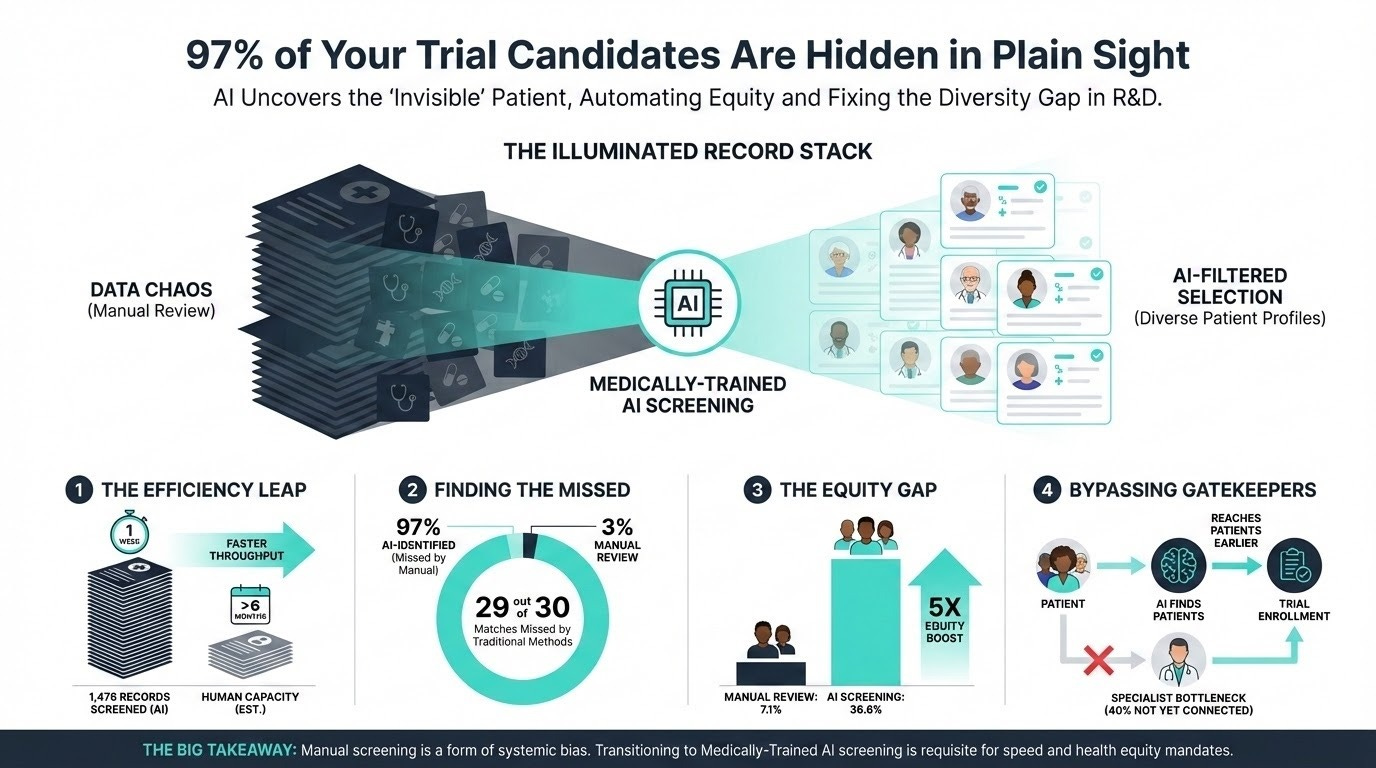

Automating Rare Disease Trial Enrollment (10 min read): Cleveland Clinic used a medically trained large language model to screen 1,476 electronic medical records for an ATTR-CM trial.

The Takeaway: It achieved 96.2% accuracy, but the real win was equity. Of the patients the AI identified, 36.6% were Black, versus just 7.1% from routine manual screening, showing that AI can shatter recruitment bottlenecks and systemic biases simultaneously.

Catching Gen AI’s Bad Advice (12 min read): Harvard Business School research on human-AI collaboration and forecasting.

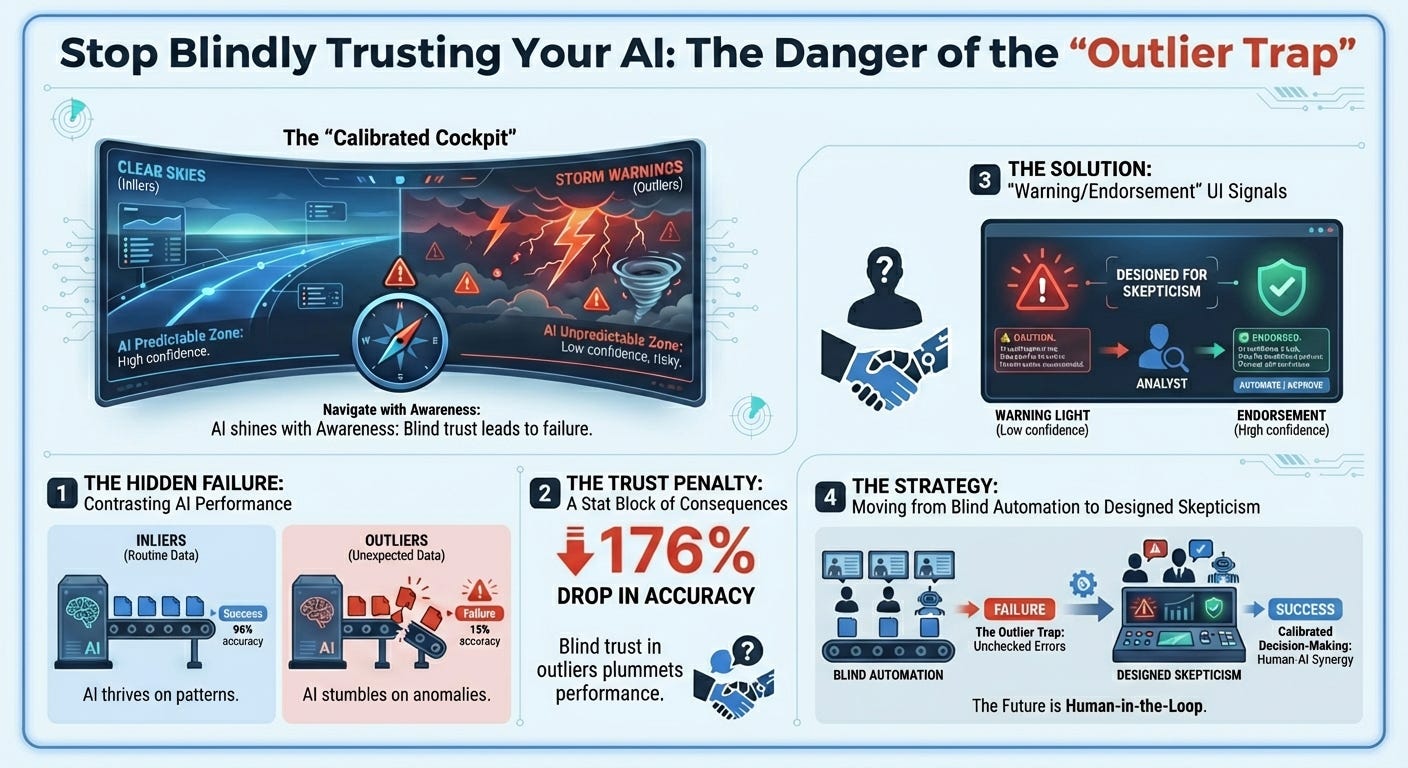

The Takeaway: Humans blindly trust confident algorithms, suffering from “naive adjusting behavior” when evaluating outlier data the AI isn’t trained on. Adding simple UI alerts—warnings for unusual data and endorsements for familiar data—cut user errors by nearly half (49%). Calibrated trust is better than blind trust.

AI Wearables in Parkinson’s Disease (15 min read): A scoping review of 66 studies on AI-enabled wearables.

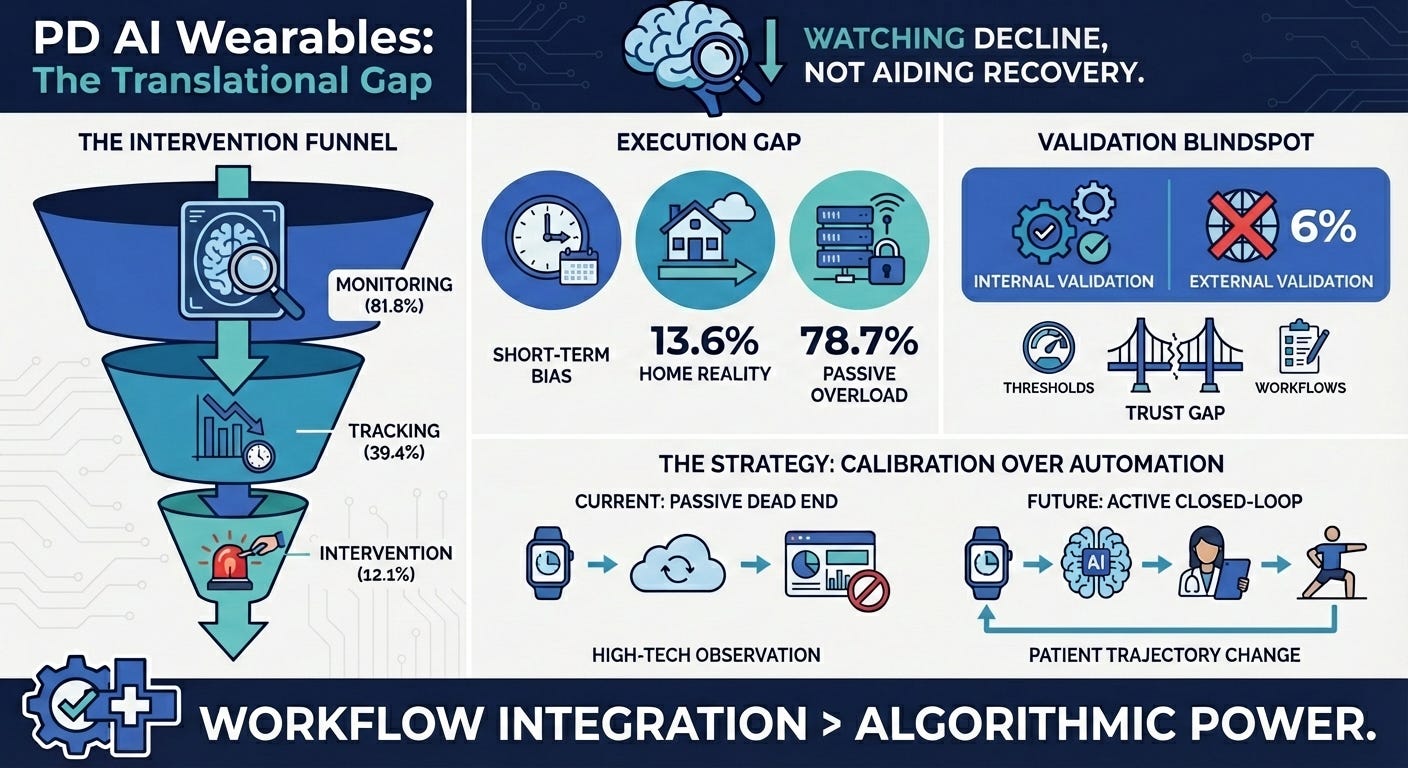

The Takeaway: The industry is stuck in the “assessment” trap. Most wearables passively monitor patients in clinical settings, but rarely translate into closed-loop, at-home rehabilitation interventions. We need to move from generating passive data to generating active clinical feedback.

Digital Interventions for Adolescent Activity (15 min read): A systematic review of digital health interventions for youth.

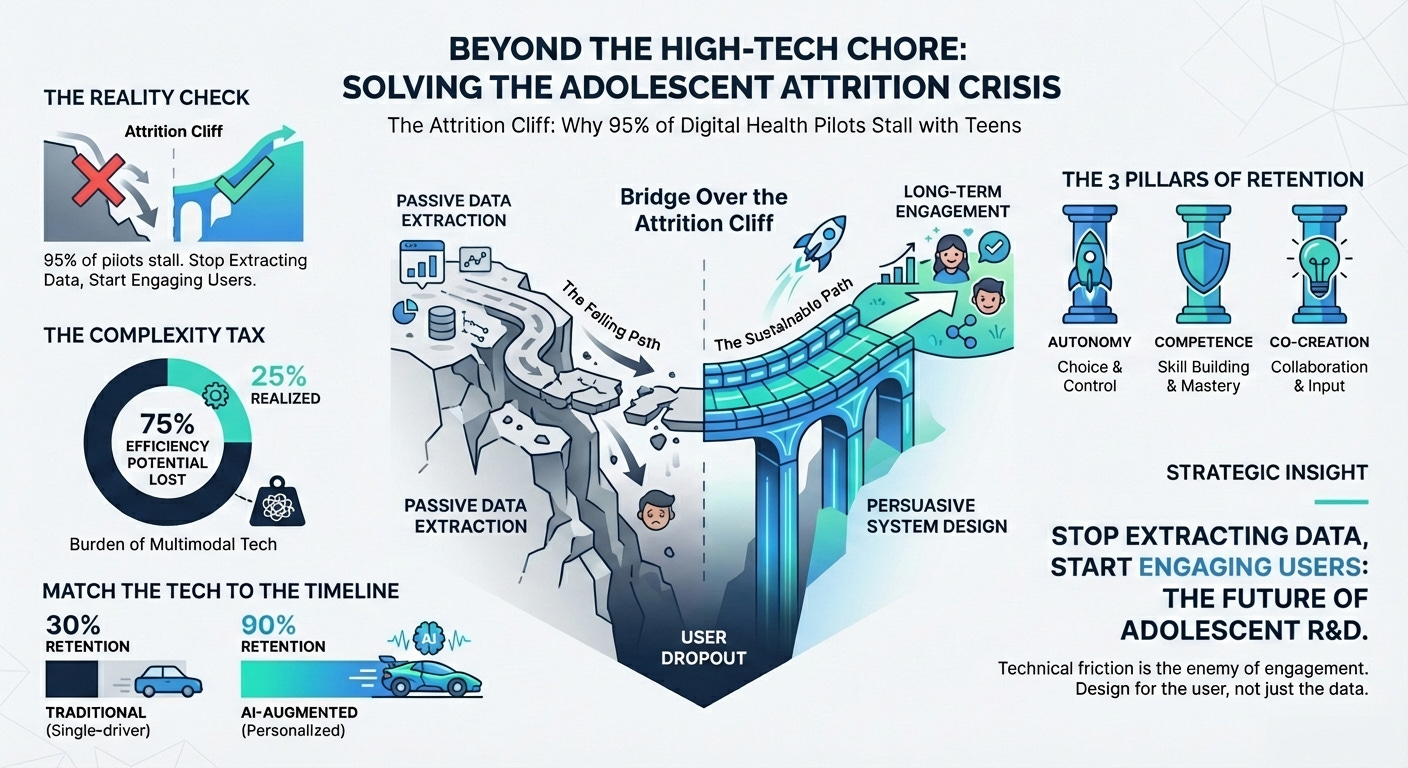

The Takeaway: While multimodal apps improve activity, attrition is a massive challenge due to “tech dependency” and lack of personalization. If your digital endpoint creates too much technical friction for the patient, you will lose your longitudinal data.

AI Triage and Real-World Equity (12 min read): A critical look at primary care AI triage systems.

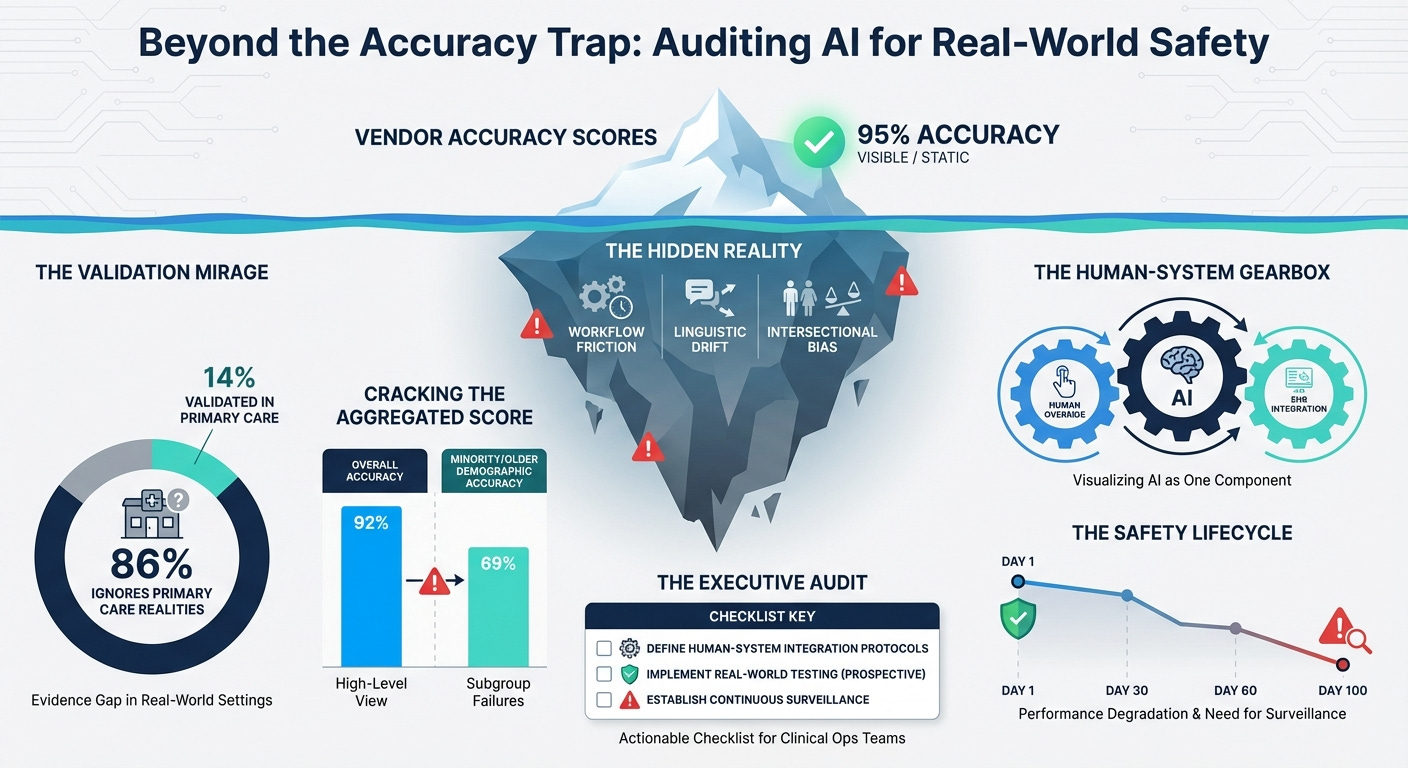

The Takeaway: Most AI triage is validated on retrospective or emergency data, ignoring real-world workflows. Worse, most systems lack “equity-stratified” reporting. If you don’t actively measure algorithmic fairness across intersecting demographics, you cannot tell whether the tool is widening disparities.

3 Things To De-Risk Your Clinical AI Strategy

In order to achieve a scalable, trustworthy AI pipeline, you’re going to need a handful of things: sociotechnical governance, continuous equity auditing, and human workflow integration.

1. Design for “Sociotechnical” Trust

You must pair user interface warnings with rigorous sociotechnical governance and clinical override protocols.

The Harvard Business School study shows that humans trust AI too much when it evaluates outlier data it wasn’t trained on. However, a warning light on a dashboard is useless if clinical staff are under organizational pressure to hit throughput metrics instead of following their intuition. True calibrated trust requires “explainable AI” that provides the specific clinical drivers for an escalation, clear uncertainty indicators, and explicit “override required” prompts for edge cases. You must actively train your investigators to override the system when human judgment contradicts the machine’s confidence. Safety comes from organizational culture and human-AI collaboration, not just model accuracy.
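The study's interventions were interface cues: warnings on unfamiliar inputs and endorsements on familiar ones. Below is a minimal Python sketch of an escalation record that carries such a cue plus an explicit override prompt. Several pieces are hypothetical choices for illustration, not the HBS study's method: the per-feature z-score check, the 3-SD cut-off, the 0.3 interval-width cut-off, and the `Escalation` fields. The feature values are made up.

```python
from dataclasses import dataclass, field
from statistics import mean, stdev
from typing import Dict, List, Tuple

@dataclass
class Escalation:
    risk: float                      # model's predicted risk (0..1)
    interval: Tuple[float, float]    # model's uncertainty band for that risk
    cue: str                         # "endorse" (familiar input) or "warn" (outlier)
    unusual_features: List[str] = field(default_factory=list)
    override_required: bool = False  # UI must ask the clinician to confirm or override

def find_outliers(case: Dict[str, float], training: Dict[str, List[float]],
                  z_cut: float = 3.0) -> List[str]:
    """Features whose value lies more than z_cut SDs from the training mean."""
    flagged = []
    for name, value in case.items():
        mu, sd = mean(training[name]), stdev(training[name])
        if sd > 0 and abs(value - mu) / sd > z_cut:
            flagged.append(name)
    return flagged

def build_escalation(case, training, risk, interval, max_width=0.3) -> Escalation:
    """Attach a warn/endorse cue and decide whether an explicit override is required."""
    outliers = find_outliers(case, training)
    too_uncertain = (interval[1] - interval[0]) > max_width
    return Escalation(
        risk=risk,
        interval=interval,
        cue="warn" if outliers else "endorse",
        unusual_features=outliers,
        override_required=bool(outliers) or too_uncertain,
    )

# Hypothetical training data for two model inputs.
training = {
    "age": [45, 52, 61, 58, 49, 66, 70, 55],
    "creatinine": [0.8, 1.0, 1.1, 0.9, 1.2, 1.0, 0.95, 1.05],
}
print(build_escalation({"age": 60, "creatinine": 1.0}, training, 0.2, (0.15, 0.25)))
print(build_escalation({"age": 60, "creatinine": 4.5}, training, 0.2, (0.15, 0.25)))
```

A real deployment would also surface the clinical drivers behind each escalation. It would use a proper out-of-distribution method rather than independent per-feature z-scores, which miss unusual combinations of individually normal values.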

2. Mandate Continuous “Post-Market” Equity Surveillance

You need to move past pre-purchase vendor checklists and mandate continuous “post-market surveillance” for equity.

The Cleveland Clinic rare disease study showed the massive upside of AI: the share of Black patients identified jumped from 7.1% to 36.6%. But as the primary care triage paper highlights, an equitable model on day one can become a biased model by day 100 due to changes in case-mix, local linguistic variations, or privacy laws that blind systems to emerging biases. You must build continuous “drift detection” and equity dashboards into your clinical operations. Use frameworks like PROGRESS-Plus to continuously monitor real-world incident reporting and intersectional subgroup calibration (e.g., age × ethnicity) to ensure you aren’t widening disparities post-deployment.
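Subgroup calibration can be checked with very little code. The Python sketch below compares mean predicted risk with the observed event rate for each age-by-ethnicity cell. It flags cells where the gap exceeds a tolerance. It covers only two of the characteristics PROGRESS-Plus asks you to consider. The field names, the 30-record minimum, the 0.05 tolerance, and the example records are hypothetical.

```python
from collections import defaultdict

def subgroup_calibration(records, min_n=30, max_gap=0.05):
    """Compare mean predicted risk with observed event rate per (age_band, ethnicity).

    records: dicts with keys age_band, ethnicity, predicted (0..1), outcome (0/1).
    Returns {(age_band, ethnicity): (n, mean_predicted, observed_rate, flagged)}.
    """
    groups = defaultdict(list)
    for r in records:
        groups[(r["age_band"], r["ethnicity"])].append((r["predicted"], r["outcome"]))
    report = {}
    for key, rows in groups.items():
        n = len(rows)
        pred = sum(p for p, _ in rows) / n
        obs = sum(y for _, y in rows) / n
        # Small cells are reported but not flagged: their observed rate is too noisy.
        flagged = n >= min_n and abs(pred - obs) > max_gap
        report[key] = (n, pred, obs, flagged)
    return report

def make_records(age_band, ethnicity, n, predicted, events):
    """Hypothetical helper: n records with a fixed prediction and `events` observed outcomes."""
    return [{"age_band": age_band, "ethnicity": ethnicity, "predicted": predicted,
             "outcome": 1 if i < events else 0} for i in range(n)]

records = (make_records("65+", "Black", 40, 0.10, 10)
           + make_records("<65", "White", 50, 0.10, 5)
           + make_records("65+", "Asian", 10, 0.10, 5))
for key, (n, pred, obs, flagged) in sorted(subgroup_calibration(records).items()):
    print(key, n, f"pred={pred:.2f}", f"obs={obs:.2f}", "FLAG" if flagged else "ok")
```

Comparing mean predicted and observed rates (calibration-in-the-large) is the simplest calibration check. With more data, per-cell calibration curves and confidence intervals would show where in the risk range a model is off.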

3. Integrate Sensors Directly Into Human Workflows

You must stop bombarding patients with app notifications and start integrating sensor data directly into human clinical workflows.

The Parkinson’s wearable review revealed that we deploy sensors to watch patients deteriorate, but we struggle to close the loop at home. Attempting to close this loop by simply adding more gamification or push notifications drives massive attrition, as seen in the adolescent digital health review, which warns of “technological dependency” and technical fatigue. If your digital endpoint requires high technical maintenance from the patient, they will drop out. The loop must be closed by integrating wearable data directly into nurse-led follow-ups and clinical dashboards, allowing human care teams to intervene exactly when the data flags a deterioration. Minimize the patient’s technical friction by letting the clinician close the loop.
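Closing the loop on the clinician's side can be as simple as turning a sustained change from the patient's own baseline into a worklist item for the care team. The patient gets no extra prompts on their phone. In this minimal Python sketch, daily step count stands in for whatever the wearable actually measures. The 14-day baseline, 3-day window, 30% drop, and `nurse_queue` list are hypothetical. None of the reviews above specifies such a rule, and a clinical deployment would need validated thresholds.

```python
from statistics import median

def deterioration_flag(daily_steps, baseline_days=14, recent_days=3, drop=0.30):
    """True if the recent average is more than `drop` below the personal baseline.

    Baseline = median of the `baseline_days` preceding the recent window.
    """
    if len(daily_steps) < baseline_days + recent_days:
        return False  # not enough history; avoid alerting on noise
    baseline = median(daily_steps[-(baseline_days + recent_days):-recent_days])
    recent = sum(daily_steps[-recent_days:]) / recent_days
    return baseline > 0 and recent < (1 - drop) * baseline

def route_to_care_team(patient_id, daily_steps, nurse_queue):
    """Add a follow-up task for the care team; the patient receives no extra notification."""
    if deterioration_flag(daily_steps):
        nurse_queue.append({"patient": patient_id,
                            "reason": "sustained activity drop vs personal baseline"})

nurse_queue = []
stable = [5000] * 17                            # hypothetical: no change
declining = [5000] * 14 + [3000, 2800, 3100]    # hypothetical: sharp recent drop
route_to_care_team("pt-001", stable, nurse_queue)
route_to_care_team("pt-002", declining, nurse_queue)
print(nurse_queue)
```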


March 20 - HealthTech Dose

March 20, 2026

This episode focuses entirely on a clear, actionable roadmap for addressing the “Shadow AI” crisis in clinical operations. The mission is to shift from unmonitored, consumer-grade AI usage toward secure, integrated infrastructure that protects the entire R&D pipeline. To succeed this decade, executives must prioritize three immediate strategic mandates: Security (protecting proprietary IP from leaking into public chatbots), Compliance (meeting FDA requirements through rigorous data validation), and Empowerment (providing site coordinators with vetted tools that actually reduce burnout). The key strategic win lies in embracing AI not as a forbidden shadow tool, but as a secure wrapper for human expertise that maintains the audit trail without breaking the clinical workflow.

Key Takeaways:

Acknowledge the “Shadow AI” reality by recognizing that site coordinators are already using unvetted AI to manage administrative burdens, creating massive unmonitored risks.

Prevent Intellectual Property leakage by implementing secure AI wrappers that keep proprietary R&D strategies and patient data off third-party servers.

Mitigate regulatory and financial risk by ensuring all AI tools used at the site level are compliant, avoiding potential multi-million-dollar trial invalidations by the FDA.

Adopt “System-Agnostic” security layers, such as blind signature technology, to add privacy protections to large language model use without forcing sites to learn complex new software.

Prioritize site-centric augmentation by integrating AI into existing workflows—like drafting patient emails and summarizing visit notes—to solve the “survival” crisis facing clinical coordinators.

Show Notes:

[0:00 - 1:30] R&D leaders are facing a “Shadow AI” crisis where clinical sites are using consumer AI tools without permission, threatening the security of the R&D pipeline.

[1:30 - 3:00] The root cause is “site fatigue”; overwhelmed coordinators use AI to translate eligibility criteria and draft emails just to keep their heads above water.

[3:00 - 4:30] Proprietary IP is at risk when investigators paste study brochures into public chatbots, effectively moving pharma-owned data onto third-party servers.

[4:30 - 6:00] Financial stakes are high; while a $50 million FDA penalty is currently a theoretical risk model, the threat of trial invalidation remains a “nightmare scenario” for mid-sized sponsors.

[6:00 - 7:30] A zero-tolerance ban is not the answer, as it drives Shadow AI further underground; sponsors must instead provide secure, guided alternatives.

[7:30 - 9:00] Secure infrastructure solutions, like the Stanford Open Anonymity Project’s “blind signatures,” allow AI to process data in a “digital envelope” without seeing sensitive content.

[9:00 - 10:30] Effective governance requires “secure wrappers” that store chat history locally on site hardware, maintaining the audit trail for future regulatory inspections.

[10:30 - End] The final strategic mandate: sponsors must adapt to the reality of site operations by deploying agile guardrails that accelerate drug development without compromising compliance.

Podcast generated with the help of Gemini
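For readers unfamiliar with the blind signatures mentioned in these notes, the Python sketch below shows the core idea in textbook RSA form (Chaum-style blinding). The primitive itself guarantees something narrow. The signer produces a valid signature on a message it never sees. This is typically used to issue access tokens that cannot later be linked to the person who requested them. This is an illustration only, not the Stanford Open Anonymity Project's implementation. The tiny key and unpadded RSA are insecure. Production systems should use a standardized scheme such as the RSA blind signatures described in RFC 9474.

```python
import hashlib
import math
import secrets
from typing import Optional, Tuple

# Toy RSA key from textbook primes p=61, q=53. Far too small for real use.
N, E, D = 3233, 17, 2753

def to_int(message: bytes) -> int:
    """Hash the message and reduce it modulo N (toy stand-in for proper encoding)."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N

def blind(message: bytes, r: Optional[int] = None) -> Tuple[int, int]:
    """Requester hides the message by multiplying it with r^E mod N."""
    # Draw a random blinding factor if none was given (or if the given one is not coprime to N).
    while r is None or math.gcd(r, N) != 1:
        r = secrets.randbelow(N - 2) + 2
    return (to_int(message) * pow(r, E, N)) % N, r

def sign_blinded(blinded: int) -> int:
    """Signer applies its private key to a value it cannot link to the message."""
    return pow(blinded, D, N)

def unblind(blind_sig: int, r: int) -> int:
    """Requester divides out r, leaving an ordinary RSA signature on the message."""
    return (blind_sig * pow(r, -1, N)) % N

def verify(message: bytes, signature: int) -> bool:
    """Anyone holding the public key (N, E) can check the unblinded signature."""
    return pow(signature, E, N) == to_int(message)

msg = b"session-token-001"  # hypothetical token a site wants certified
blinded, r = blind(msg)
signature = unblind(sign_blinded(blinded), r)
print(verify(msg, signature))  # True
```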